Thursday, May 21, 2026

Making Multi-Camera ReID work when mistakes cascade

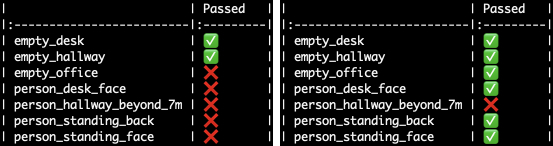

Multi-Camera person ReID looks like an extension of face ID, but the signal is weaker, so every mistake compounds. Here's how we still achieved our accuracy goals.

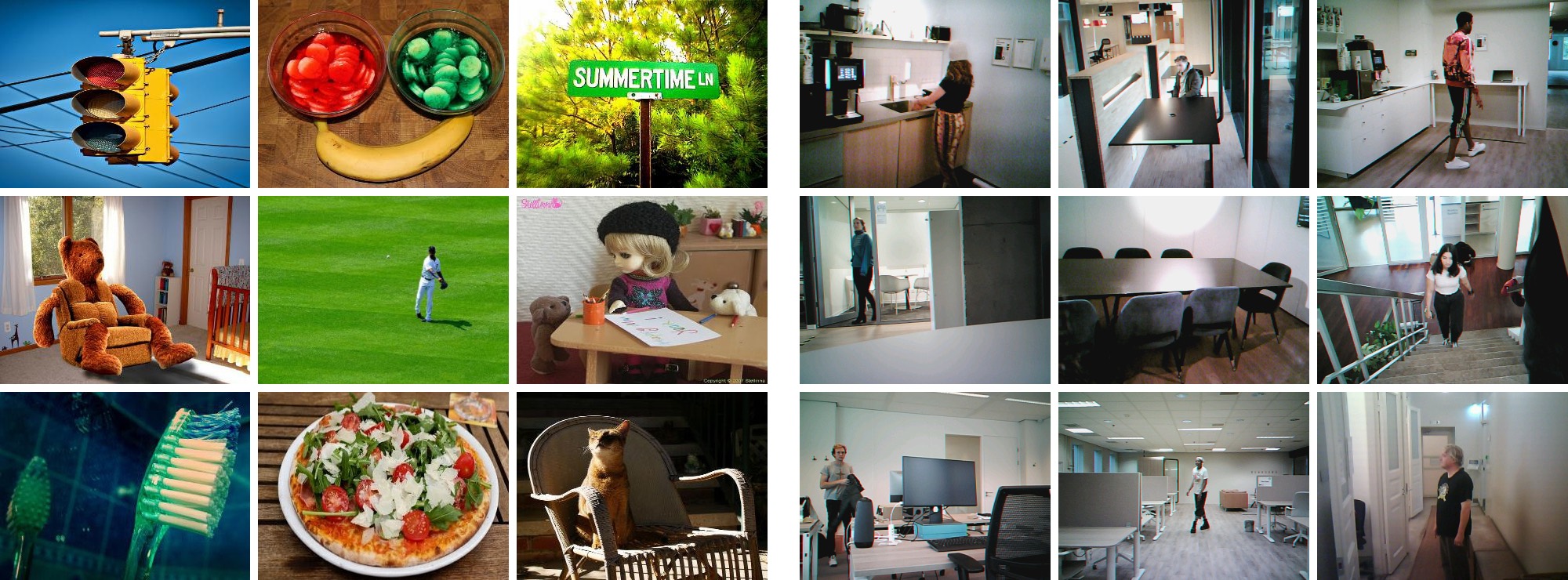

Multi-Camera Re-Identification (ReID) recognizes the same person across different cameras and viewpoints without relying on face recognition. In smart home security cameras, it solves notification overload: instead of getting a separate alert every time the same person is detected again, the system groups those events into one. In enterprise security, it enables tracking individuals across a camera network and supports tasks like loitering detection, where you need to know that the person who has been lingering near a side entrance is the same one who was seen at the parking lot fifteen minutes ago.

In the video below, a gardener mowing the lawn triggers 34 push notifications in less than an hour on a multi-camera setup without ReID. With ReID, those repeated sightings of the same person collapse into a single event.

The setup

At Plumerai we previously built the automatic enrollment mode of FFID, our Familiar Face Identification system. FFID uses an end-to-end deep learning pipeline of three neural networks for detection, face representation, and face matching. We covered the technical details in our tinyML talk, and later expanded it with automatic enrollment. The core task is the same in both automatic FFID and Multi-Camera person ReID: automatically enroll previously unseen individuals and reliably match them when they reappear. Both systems do this by mapping image crops through an embedding network into a high-dimensional space, where crops of the same person should cluster together and crops of different people should sit far apart.

Faces are exceptional identity inputs: geometrically normalized and information-dense. Person appearance (clothing, build) is none of those things. Outfits change, and the same clothing can look entirely different from the front, the side, or the back. Identity clusters in appearance embedding space are broader, fuzzier, and more likely to overlap. This gap doesn’t fully close even as the embedder improves. It is an inherent property of the input signal. To build an accurate ReID system, we had to significantly expand our processing pipeline and improve every component in it.

Why this matters: errors cascade

Mistakes in a ReID pipeline are not independent. A tracking error that bundles embeddings from two different people corrupts the representation, which causes a wrong enrollment decision, which pollutes the gallery and causes future matching errors. In this context, “gallery” refers to the database of stored identity representations that the system matches new observations against. Each mistake seeds more. In face ID the margin is generous enough that the system usually self-corrects. In ReID, the weaker signal means the same cascade runs further before it’s caught.

In face ID, a mistake is mostly an isolated setback. In Multi-Camera person ReID, a mistake is the beginning of a chain of mistakes. That is what set the bar for everything we built.

Here is how we approached the problem, and what we had to get right. Eight components, one at a time.

Building an identity representation

A single embedding from a single frame is too noisy to act on. The goal is to aggregate observations over time into a compact, representative description of a person, one robust to any single bad crop. Getting that aggregation right required solving five things.

Component 1: Detecting people

Before we can embed crops of people, we need to find them in the video stream. This is where our object detectors and our advanced motion detection come in. A person that was never detected in the first place can’t be re-identified. A crop that includes multiple people, or only part of a person, will cause degraded embeddings. Our people detection is both accurate and efficient, setting the rest of the pipeline up for success. Our advanced motion detection allows our detector to spend its compute where it matters, ensuring that even an efficient detector won’t miss people walking by in the distance.

Component 2: The embedder

The next component is the embedding network: the model that maps a person crop into a point in high-dimensional space. A better embedder produces tighter clusters, which results in easier decisions everywhere downstream.

To create an embedder that was both highly efficient and highly accurate, we optimized every stage of the training process.

We invested heavily in assembling relevant training data rather than relying on off-the-shelf datasets. We also built specialized ReID labeling tools to make this job both efficient and accurate, since the volume of data required and the subtlety of appearance-based identity made general-purpose annotation workflows insufficient.

We trained with a mix of several losses, one of which we carefully designed to encourage the embedder to encode input quality into the embedding (which is helpful downstream, see Component 4). We optimized the architecture (through neural architecture search) to be accurate even on a tiny compute budget. We optimized our knowledge distillation process to preserve the input-quality information of the embeddings (in addition to preserving the embedding space structure of the teacher with a fraction of the compute). Our embedder training pipeline consists of several stages, with different datasets and objectives, which all together produce a model that is both accurate and efficient.

The challenge for ReID is that person appearance offers none of the structure that makes face embedders easy to train: viewpoints vary wildly, scale changes, and clothing shifts dramatically under different lighting. There are no clean geometric constraints to exploit. As a result, even a very good ReID embedder produces appearance clusters that remain inherently broader than face clusters. A better ReID embedder narrows the gap but doesn’t close it. The remaining ambiguity between overlapping clusters still had to be absorbed by every downstream component. That realization is what shaped the rest of the system.

Component 3: Short-term tracking

Before you can aggregate embeddings, you need to know which ones belong together. Short-term tracking does this by running a multi-object tracker across consecutive frames and associating detections into per-person tracklets. When the tracker is correct, every embedding in a track comes from the same person. When it makes a mistake (swapping boxes during an occlusion, or continuing a track past an entry or exit), the aggregated representation silently starts describing a mix of two different people.

In face ID, these errors surface quickly: face embeddings from different people are distinctive enough that the mismatch is hard to miss. In ReID, similar clothing or build can make two people’s embeddings close enough that a tracking error goes unnoticed much longer. To make ReID work we had to roughly halve our tracker’s identity-switch rate compared to what FFID needed, combining location, appearance, detection metadata, and more in an optimal way.

The hardest part of tracking is data association: deciding, frame by frame, which detection belongs to which track. Classical approaches rely on hand-tuned heuristics or fixed distance thresholds. We trained our data association policy with reinforcement learning, letting the system learn from experience which assignment decisions lead to the best long-term tracking accuracy rather than optimizing each frame in isolation. The result was not just fewer stolen tracklets, but also much longer and more consistent tracking.

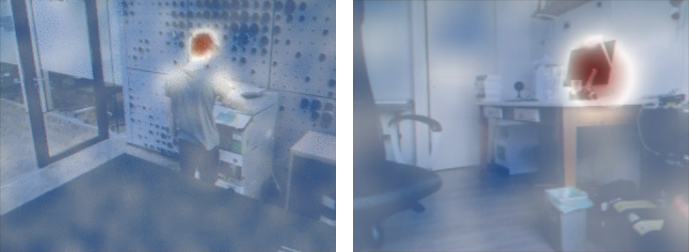

Component 4: Embedding quality estimation

Even within a clean track, not all embeddings are worth keeping. A blurry thumbnail, a person half-obscured by a doorframe, a crop taken mid-motion at the edge of the frame: these can land far from where they should be in the identity space, and admitting them degrades the aggregated representation. A single bad embedding that slips through can tip a borderline aggregation toward the wrong cluster, so we had to work hard to keep them out.

Detecting poor crops required real research. We used the learned quality estimation we trained into the embedder together with additional signals from our pipeline to emphasize the best crops per track. This helps us get high-quality aggregated embeddings, even when many of the individual crops include multiple people, partial people, or degraded image quality. It made the difference between a system that stayed accurate and one that drifted.

Component 5: Embedding sub-sampling

Even after quality filtering, there’s a subtler problem: the remaining embeddings are not independent and identically distributed (IID). Consecutive frames share the same lighting, the same angle, the same partial occlusion. When samples are correlated like this, averaging them doesn’t reduce variance the way it would for independent observations. It just encodes whatever systematic bias happens to be present right now.

The fix is to select for diversity rather than take everything. An embedding earns a place in the stored set only if it is both high-quality and meaningfully different from what’s already there. This builds a spread-out sample of the person’s appearance distribution instead of a dense clump of near-identical frames from one viewpoint, and diversity-aware aggregation turned out to be one of the more important things we got right.

Matching and gallery management

Once a robust identity representation has been built, it feeds into two further decisions that determine the overall accuracy of the system.

Component 6: Matching vs new enrollment

For each new observation of sufficient quality, the system faces a binary choice: known person, or someone new? Both directions of error matter. A false match corrupts the wrong person’s stored identity. A false enrollment fragments a known person across multiple gallery entries. Either way, future observations are matched against a gallery that’s less accurate than it was before, setting us up for more errors and an even further degraded gallery.

Calibrating this choice correctly is crucial for ReID, as mistakes on either side of the decision will start to snowball.

Component 7: Gallery health management

Even a well-tuned system makes mistakes. Some are obvious and self-correcting. Others are subtle: a match that just cleared the threshold, a near-duplicate enrollment that slipped past the filter. These accumulate quietly. Left unaddressed, a gallery that starts clean gradually becomes one full of fragmented identities, silently merged entries, and stale records.

Keeping a gallery healthy means actively monitoring for corruption and correcting it: consolidating fragments and pruning entries. In ReID, the higher error rate upstream means gallery maintenance isn’t optional housekeeping. It’s a core part of the system.

Putting it all together

This post primarily covers the ML and algorithmic side of Multi-Camera ReID, but making it work also required substantial system-level software engineering.

Component 8: System-level integration

To operate reliably across multiple cameras and deployment environments, the system had to keep galleries synchronized across cameras, integrate ReID with FFID, expose a clean API for on-device, on-prem, cloud, and hybrid deployments, and keep compute and memory usage low enough for consumer-priced cameras and cost-sensitive cloud infrastructure. Those are stories for another time.

Every component has to earn it

Person appearance embeddings are inherently harder to cluster than face embeddings: broader, fuzzier, more likely to overlap. Because errors cascade, there is very little slack for any component to be merely good enough. A weak tracker corrupts the aggregation. A leaky quality filter corrupts the representation. A poorly calibrated enrollment decision corrupts the gallery. And a gallery that isn’t actively maintained degrades over time, taking accuracy with it.

The only way to build a ReID system that stays accurate, even over longer periods of time, is to make each component genuinely good. That took serious research and engineering, and it’s what made all the difference.

Wednesday, April 8, 2026

Running modern AI on a 1985 Atari ST

Plumerai People: Cedric's side project of running modern software on ancient hardware.

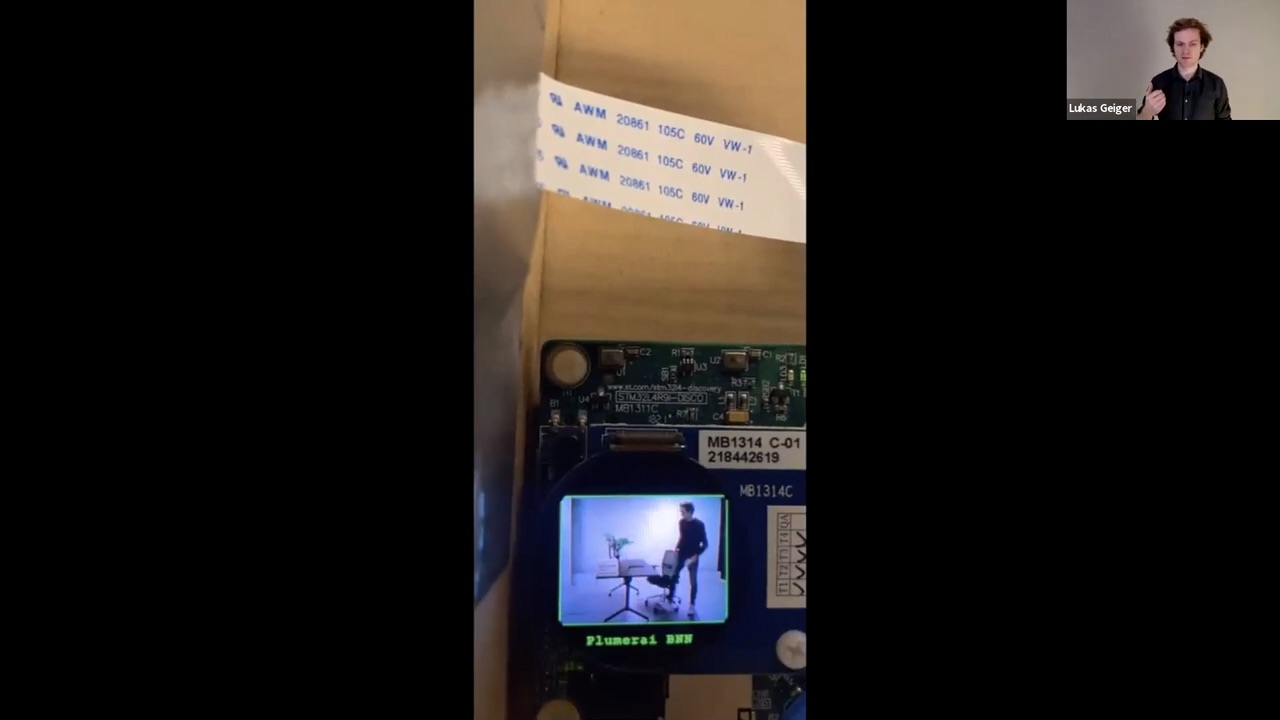

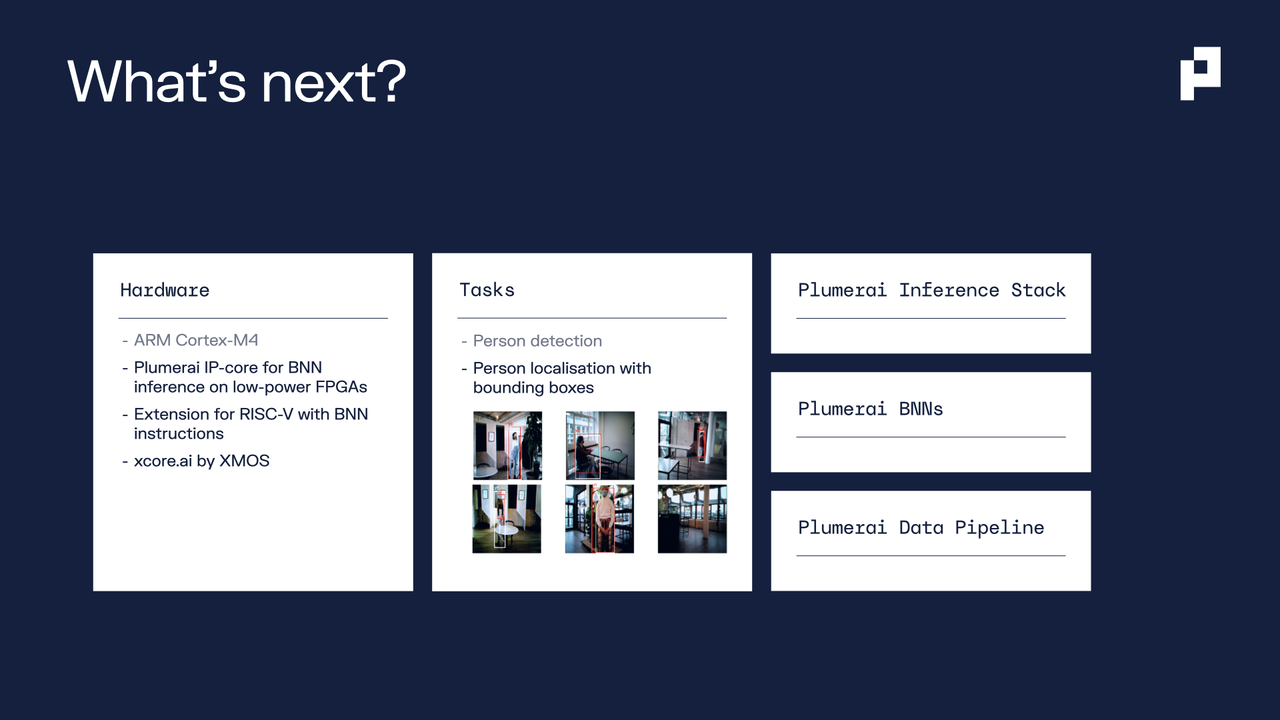

One of our engineers, Cedric Nugteren, recently set himself an unusual challenge: running modern AI on a computer from 1985. In the video below, Cedric introduces the Atari ST, a system he first programmed as a 6-year old, and revisits it as a hobby project decades later. He demonstrates his MidiSurf game, and shows how he successfully ported Plumerai People Detection to the Atari ST. Despite the hardware’s age and constraints, the system is able to run real inference of a convolutional neural network.

This project is a fun example of what happens when curiosity meets efficiency: pushing modern AI to run on unconventional, highly constrained devices is exactly the kind of challenge we enjoy tackling at Plumerai.

Apply here if you want to work with Cedric and other amazing AI engineers and researchers.

Thursday, April 2, 2026

Multi-Camera Re-Identification solves notification overload

Your kids are playing basketball on the driveway. You’re packing your car before a trip. Or, as in the video below, your gardener is mowing the lawn and triggers 34(!) push notifications on your phone in less than an hour.

Notification overload is the number one annoyance for smart home camera users. It’s the key reason many users have only one camera deployed at home. Because more cameras generate even more useless notifications.

We built Plumerai Multi-Camera Re-Identification to solve this. Familiar Face Identification in itself is not sufficient - even though Plumerai’s version is highly accurate - simply because faces are not visible in most videos. That’s why Plumerai Multi-Camera Re-Identification analyzes people’s non-facial appearance, such as clothing and hairstyle, to re-identify people from any angle.

It’s small and fast enough so that it can run on-device for most cameras. And since it doesn’t rely on privacy-sensitive biometric data, our re-identification can be deployed anywhere.

Our researchers and engineers have worked on this technology for years. We’ve trained it on large amounts of video data and packed it with our unique Tiny AI and reinforcement learning technology. And now we’re ready to bring Plumerai Multi-Camera Re-Identification to the world. A world without notification overload.

Contact

Contact us for more information.

Thursday, March 19, 2026

Plumerai Advanced Motion Detection

The unsung first step in on-device video intelligence.

Motion detection is one of the most common tasks for smart home cameras: determining whether something is moving in the scene and if so, where. It can be useful on its own, triggering a notification or starting a recording, but it also plays an important role as the first stage of a larger video intelligence pipeline. Algorithms such as people detection and face identification can operate without it, but they perform better when motion detection indicates where they should focus.

Despite being conceptually simple, getting motion detection right in practice is surprisingly difficult. Getting it right on a device with limited compute is harder still.

Advanced Motion Detection applied to a simple scene. The red squares indicate where the algorithm detected motion, on a configurable grid.

More than just alerts

The obvious use of motion detection is triggering notifications, but that is only part of the story. In practice, motion detection serves several roles in a camera system:

Filtering PIR false positives. Many battery-powered cameras use a passive infrared (PIR) sensor as a wake-up trigger. While PIR sensors are cheap and draw almost no power, they are not very precise: a warm gust of air or a sun-heated surface can trigger them. When the PIR fires, the camera wakes up and starts the video pipeline. Advanced motion detection then acts as a second opinion: if it confirms there is no relevant motion, the camera can go back to sleep within milliseconds, saving significant battery life.

Starting and stopping clips. Motion detection determines when to begin and end a recording clip. Without it, the camera would either record continuously (expensive in storage and bandwidth) or rely solely on the PIR sensor (which cannot tell you when the event is over). Accurate motion boundaries mean clips that start just before the action and end shortly after, rather than cutting off too early or running for minutes after the scene is empty.

Guiding the rest of the pipeline. Motion detection output is more than a binary “something moved” signal: it produces a spatial map of where motion is happening in the frame. Downstream algorithms such as people detection and familiar face identification use this map to focus their attention on the regions that matter, reducing both false positives and compute cost.

Zone-based events. The spatial motion map can also be reused to monitor specific regions of the scene. Users can define zones, such as a doorway or driveway, and trigger events only when motion overlaps with those areas, reducing nuisance alerts from irrelevant parts of the frame.

Why it is hard

At first glance, motion detection seems straightforward: compare the current frame to a background model and flag what changed. That is essentially what a simple background-subtraction algorithm does, and it works well in a controlled indoor environment with stable lighting.

Outdoors, things fall apart quickly. Rain and snow create thousands of small pixel changes per frame. Wind causes trees and bushes to sway constantly. A cloud passing over the sun can shift the brightness of half the image in an instant. At night, the camera’s own IR illuminator lights up tiny airborne dust particles that are invisible to the naked eye. Each of these phenomena looks like “motion” to a naive algorithm, but none of them are relevant for security.

Below are side-by-side comparisons with Plumerai on the left and a conventional background-subtraction algorithm on the right, demonstrating that the advanced Plumerai algorithm handles all these potential problems.

Rain

The Plumerai algorithm ignores both the falling rain and the splashing of raindrops on the puddle. Motion is only reported for the moving car and motorbike.

Snow

Heavy snowfall, even when illuminated by the camera’s IR light, does not trigger any motion.

Lighting changes

When cloud cover suddenly blocks the sunlight, large parts of the scene change brightness. A naive algorithm sees a huge difference between the current frame and the background model and reports motion everywhere. The Plumerai algorithm recognizes that the lighting has changed but the underlying texture has not, and continues to report motion only for the moving person.

IR-illuminated dust

At night, a camera’s IR light can illuminate tiny airborne dust particles that are invisible to the human eye. These particles appear as bright, rapidly moving dots, and they are a common source of false triggers. The Plumerai algorithm specifically suppresses this type of motion.

Wind

Trees and plants moving in the wind are perhaps the single most common source of false alerts in outdoor cameras. The Plumerai algorithm recognizes the dynamic and repetitive nature of their movement and does not report it as motion.

Running on tiny devices

Motion detection runs on every frame, so it has to be fast. On the kind of low-cost, battery-powered cameras where it matters most, “fast” means “with almost no resources.”

Plumerai Advanced Motion Detection requires only a few kilobytes of ROM for code. RAM usage scales with input resolution: using only 243 KB at 640x480 or 693 KB at 1280x720. On an Arm Cortex-M3, it runs at 10 FPS while consuming less than 10% of the CPU. It also runs on x86, Aarch64, and can take advantage of NPU accelerators.

Another important design choice: the algorithm does not require a warm-up period. Many motion detection approaches need a sequence of initial frames without motion to build a background model. That is a problem for PIR-triggered cameras, which wake up precisely because something is already happening. Plumerai’s algorithm can start detecting from the very first frame.

And for users already running other parts of the Plumerai Video Intelligence suite, such as People Detection, Familiar Face Identification, or our VLMs, motion detection comes at no additional memory or compute cost. It is already running as part of the pipeline.

A quiet backbone of camera intelligence

Motion detection rarely gets much attention in discussions about camera AI. But it quietly influences how well everything else works.

If the motion signal is noisy, the entire pipeline becomes inefficient: batteries drain faster, clips are poorly timed, and higher-level AI wastes compute on irrelevant parts of the frame. If the motion signal is reliable, the whole system becomes better and more efficient.

That is why we treat motion detection not as a simple feature, but as an important foundation of our video intelligence pipeline.

More information is available in our documentation.

Monday, March 16, 2026

Not-quite-ivory towers

Plumerai People: Alex reflects on his transition from academia to industry

by Alex Chebykin

“How to make the damn thing work?” is the key question guiding most engineers. To answer it, reality has to be faced head-on. As you test your ideas against it, you get to watch again and again “the slaying of a beautiful hypothesis by an ugly fact”. Incidentally, this is the phrase Thomas Huxley used to describe “the great tragedy of Science”. This tragedy is clearly shared with engineering.

But any tragedy - metaphorical or literal - can also bring improvement, as brilliant people work together to dispel the darkness. In science, this has been the role of academia as an institution and a community of communities devoted to collaborative search for truth.

It is no secret that the academia of yore is gone. Bureaucrats and careerists have infiltrated the ivory tower, which remains standing only thanks to the valiant efforts of true believers. I’m grateful to have done my PhD at a lab where integrity is taken seriously and good science is being done and taught - which definitely left a positive mark on me. Still, not every PhD student is as lucky as I, and so finding honest work amid derivative papers and misleading claims is a disheartening endeavor.

During the first year of my PhD, I wasted time and effort engaging with results from other labs that never should have reached anyone’s eyes - or left the authors’ fingertips. I grew ever more skeptical (anyone who knew me before would be surprised this was even possible!) and disillusioned with the prospects of academia-driven science beyond the walls of (unfortunately) not-too-common good labs.

As an engineer at heart, I’m interested in building things that work and improve the world, so after submitting my thesis, I sought to switch to industry. Of course, industry is no fairy tale, with some companies peddling hot air and some working hard to usher in the apocalypse. Still, it is possible to find a place where reality is faced and then molded to become more accommodating to humans.

When I joined Plumerai last December, I expected to work on a concrete, useful application - and so I do. What I didn’t expect was that, at the heart of the company, there is a little tower of academia-as-I-wish-it-were. Where positive and negative results are written up with no pretense, and the formulaic rigidity of mainstream scientific writing gives way to brevity and humor. Where the tools used to cut the building blocks of a solution are the most applicable ones - even if they are not the fanciest or the most novel.

In short, I found a group of people shaping a part of reality together, building a little tower of our own knowledge. While I find myself happy to be lending a hand, it irks me that the wider world cannot learn about the imaginative designs developed here (building a tower does require resources and therefore secrets) - and that the culture of honest inquiry is spread across separate towers like this one, each built away from others.

I believe that a more collaborative way can be achieved, with innovation shared more broadly and efforts aligned to speed up the building process for everyone. Although shaping institutions is not my calling, I’m convinced it is a job that needs doing. I’m delighted that people stronger than me put their hours and their sweat into trying to revamp the large ivory tower. I salute you as I turn back to our plainer tower and heave another stone on top. It may not be as fancy as ivory but it gets the job done - and the elephants will surely thank us.

(Image source: Unsplash)

Tuesday, March 10, 2026

Meet Plumerai at ISC West 2026

Attending ISC West?

At ISC West, we’re showing how Plumerai combines tiny AI models with powerful LLMs to deliver better AI for cameras, NVRs, and VMS platforms, enabling new capabilities such as natural-language video search and other advanced AI features.

Our customers integrate the Plumerai software to ship faster, more accurate, and more efficient AI features across their product lines, while reducing cloud compute costs by 10x or more. Our neural networks are highly efficient and can run directly on the camera, on-prem, or in the cloud. When deployed in the cloud, they require far less compute than alternatives, significantly reducing cloud infrastructure costs.

If you’re building AI video analytics and want to see what’s next, let’s meet. We’re setting up meetings in advance.

📍 Booth 34111

📅 Schedule a meeting in advance

🚀 See our latest demos

See you there!

Tuesday, September 16, 2025

Plumerai raises $8.7M Series A to connect Vision LLMs to trillions of edge devices

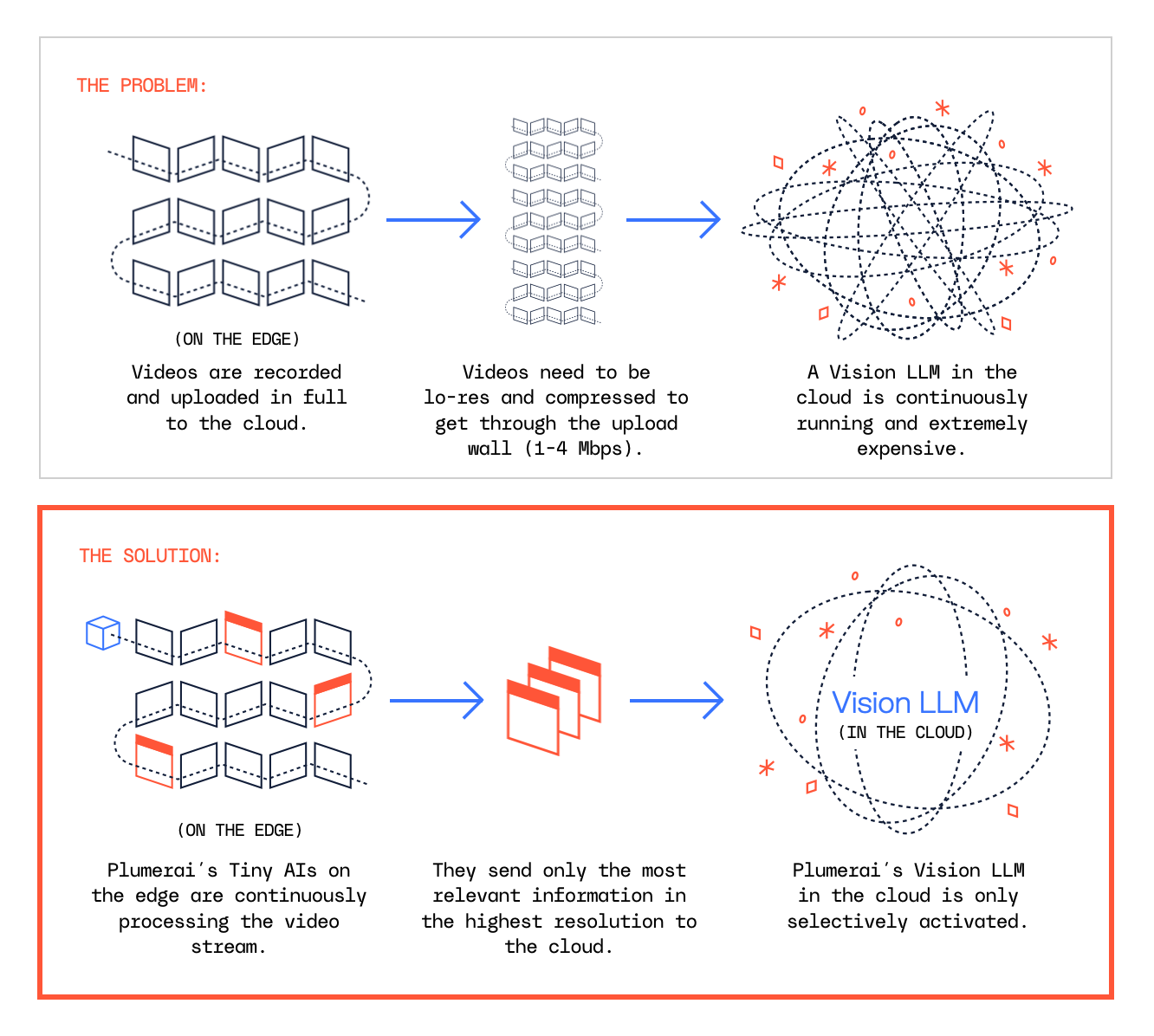

Plumerai launches its first Vision LLM-powered features, enabled by its unique combination of Tiny AI on the edge to direct Vision LLMs in the cloud

Press release

London – September 16, 2025 - Plumerai™, a pioneer in on-device AI for cameras, today announced an $8.7M Series A funding round, led by new investors Partech and OTB Ventures, with support from Acclimate Ventures and existing investors. This brings the total funding received to over $17M. The new funding will drive Plumerai’s ambition to enable trillions of intelligent devices, a future that is now accelerated by its new Vision LLM features.

Plumerai’s Tiny AI is already running on millions of cameras, and includes People, Vehicle, Animal & Package Detection, Familiar Face & Stranger Identification, and Multi-Camera People Tracking. It’s faster, cheaper, private, runs on battery-powered cameras, and uses inexpensive and off-the-shelf chips. With an initial focus on home security cameras – where it has won major customers such as Chamberlain Group, whose brands include myQ and LiftMaster and whose products are found in 50+ million homes – it is now rapidly expanding into Enterprise Security, Retail, and more.

In addition to the funding, Plumerai announced today its first Vision LLM-powered features:

- AI Video Search helps users search for anything in their camera’s video history, e.g. ‘person peering through car window’.

- AI Captions accurately describe what actions took place in a video, enabling cameras to send rich notifications to their owners such as ‘A delivery driver rang your doorbell, placed the package on the ground, and left again’.

These features unlock valuable new applications for Plumerai’s customers, and are driven by the recent advances in multimodal LLMs that make it possible to draw deep insights from videos. It is Plumerai’s unique approach of combining its Tiny AI, which runs on the edge, with its cloud-based Vision LLM, that together enable unmatched accuracy with record low cloud inference costs. Benchmarks performed by customers show Plumerai’s AI Video Search to be more accurate than cloud-only solutions such as Amazon Nova and Google Gemini, and to have cloud costs up to 135x lower.

“We are at an exciting point in time where powerful Vision LLMs are now both accurate and cost efficient enough to open up valuable new use cases, thanks to our combination of on-device Tiny AI with cloud-based Vision LLMs,” says Roeland Nusselder, Founder and CEO at Plumerai. “We’re going to make it possible for everyone to have their own AI security guard and assistant that never gets distracted, so they feel safer and calmer than ever before. It will warn you about a stranger in your backyard, a leaking water pipe, or simply help you find your keys. And it’s not just for the home, but for retail, offices, warehouses, elderly care and more. The trust of our partners and investors allows us to bring powerful AI into the physical world, beyond desktops and the cloud.”

“Integrating Plumerai’s Tiny AI into our smart camera lineup has allowed us to offer advanced AI features on affordable, low-power devices. This enables us to provide our customers with smarter, more efficient products that deliver high performance,” said Jishnu Kinwar, VP AI & Innovation at Chamberlain Group.

Reza Malekzadeh, General Partner at Partech, says: “There are many companies building great products but they struggle to add powerful AI features to their devices. Plumerai enables them to do this, move faster to market, with high quality AI features, and lower development costs. They are on track towards a future where trillions of intelligent edge devices are equipped with their AI.”

“We are particularly impressed by the strength of Plumerai’s technology and product, which has clearly outperformed in every technical evaluation performed by customers,” adds Marcin Hejka, Managing Partner at OTB Ventures. “Since customers can use simple over-the-air software updates to activate Plumerai’s AI on devices that are already in the field, Plumerai has been able to scale up quickly with rapid ARR growth as a result. We look forward to working with Roeland and the entire team on this next stage of growth.”

About Plumerai

Plumerai is a pioneering leader in AI solutions for cameras, specializing in highly accurate and efficient Tiny AI for smart devices. As the market leader in licensable Tiny AI for home security cameras, Plumerai powers millions of devices worldwide and is rapidly expanding into enterprise security, smart retail, and more. Its comprehensive AI suite includes People, Vehicle, Animal & Package Detection, Familiar Face & Stranger Identification, Multi-Camera People Tracking, AI Video Search, and AI Captions, all designed to run locally on nearly any camera chip. Headquartered in London, with an office in Amsterdam, Plumerai enables developers to add sophisticated AI capabilities to embedded devices, while prioritizing efficiency and user privacy.

About Partech

Partech is a global tech investment firm headquartered in Paris, with offices in Berlin, Dakar, Dubai, Nairobi, and San Francisco. Partech brings together capital, operational experience, and strategic support to back entrepreneurs from seed to growth stage. Born in San Francisco 40 years ago, today Partech manages €2.7B AUM and a current portfolio of 220 companies, spread across 40 countries and 4 continents.

About OTB Ventures

OTB Ventures is a pan-European deep tech VC fund specializing in Series A and late seed rounds. Its focus lies in supporting startups that pioneer unique technologies across three key verticals: Enterprise AI and Data, SpaceTech and Physical AI, and Novel Computing. Established in 2017, OTB Ventures currently manages $350 million and has offices in Amsterdam, Warsaw, Luxembourg.

Contact

Contact us for more information.

Wednesday, April 2, 2025

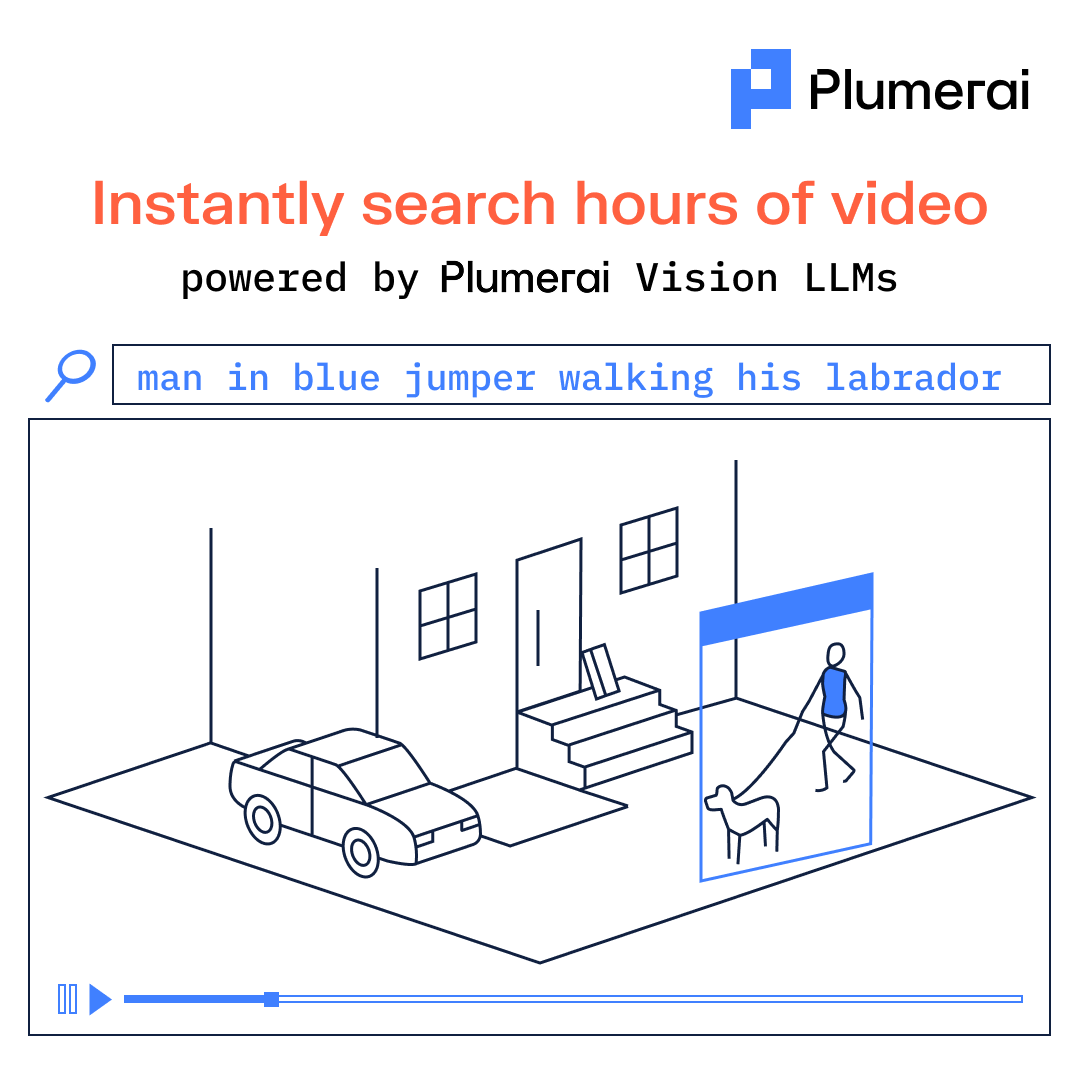

Instantly search hours of video

Smart home cameras capture hours of footage, but who has time to sift through it all?

With Plumerai AI Video Search, you can search for anything:

“Kid on a scooter wearing a red helmet” or “Man in a blue jumper walking a labrador”

Just type it. Get results instantly.

Powered by Plumerai’s Vision LLM, it’s so efficient, it can even run entirely on the camera itself - no cloud needed, zero compute cost.

Tuesday, March 25, 2025

Fast & accurate Familiar Face Identification, even from a distance

Run Plumerai’s Familiar Face Identification on your video doorbells to deliver fast, accurate detection of friends and family. Enable your users to know when a loved one arrives home, or to turn off alerts for familiar faces to minimize unnecessary interruptions; it even detects them from a distance. Best of all, since all the AI runs inside the camera, privacy is respected and there’s no cloud compute cost.

![]()

Tuesday, February 25, 2025

The complete tiny AI solution for smarter homes

Plumerai’s complete tiny AI solution powers advanced features, including Familiar Face Identification and AI Video Search, directly on your smart home cameras and video doorbells. It’s the most accurate and compute-efficient solution on the market, all while running entirely on the edge, eliminating the need for costly cloud compute. Give your users the ability to enjoy precise and reliable notifications without compromising their privacy.

See our Smart Home Cameras page for more information and demos.

Tuesday, December 10, 2024

Meet Plumerai at CES 2025

CES 2025 is just around the corner! We’ll be presenting our innovations in AI video search and multi-camera re-identification, and discussing the future of smart home cameras.

Are you developing cameras? If so, send a message to [email protected] to book a meeting at our private suite in The Venetian.

Thursday, December 5, 2024

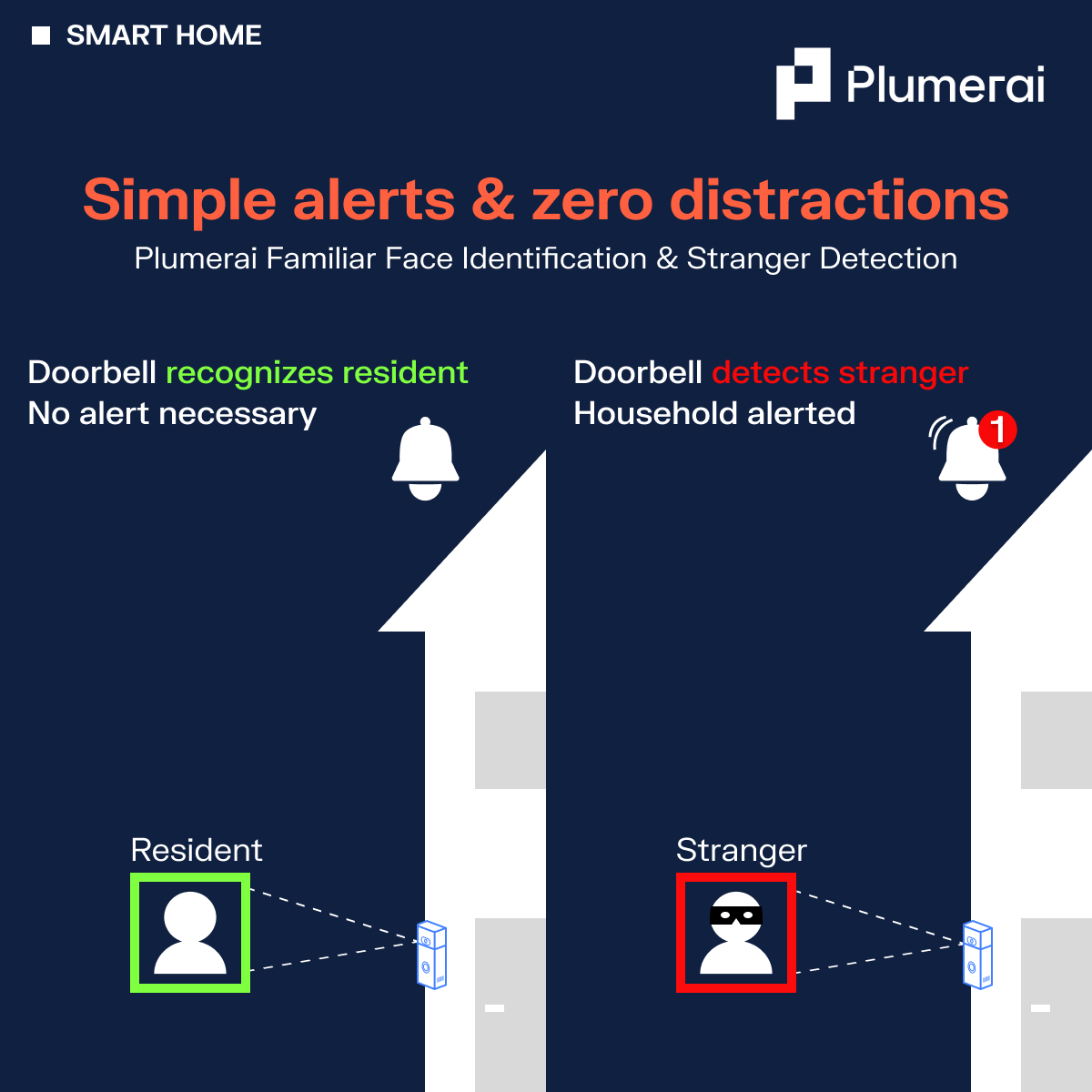

Simple alerts & zero distractions

Our advanced AI software transforms your cameras into smarter, more user-friendly devices that deliver meaningful notifications tailored to your users’ needs:

Plumerai Familiar Face Identification: Empowers users to silence alerts for household members, minimizing unnecessary interruptions to their day.

Plumerai Stranger Detection: Ensures users are notified when it matters most, offering enhanced security and peace of mind.

This innovative technology filters out the noise often created by the smart home ecosystem, creating an engaging and streamlined user experience that puts the user in control. Try out Plumerai Familiar Face Identification in your browser here!

Friday, November 22, 2024

The complete on-device AI solution for smarter home cameras

Plumerai offers a complete AI solution for cameras and video doorbells, packed with advanced functionalities, from Familiar Face Identification and AI Video Search, to recognizing people, vehicles, packages, and animals!

Our tiny AI runs directly inside the camera, ensuring top-notch privacy while eliminating the need for costly cloud compute. It’s the most accurate and compute-efficient solution on the market, delivering precise notifications your users can trust. From identifying unexpected visitors to capturing animals in the garden, Plumerai ensures no important moment goes unnoticed.

See our Smart Home Cameras page for more information.

Tuesday, October 22, 2024

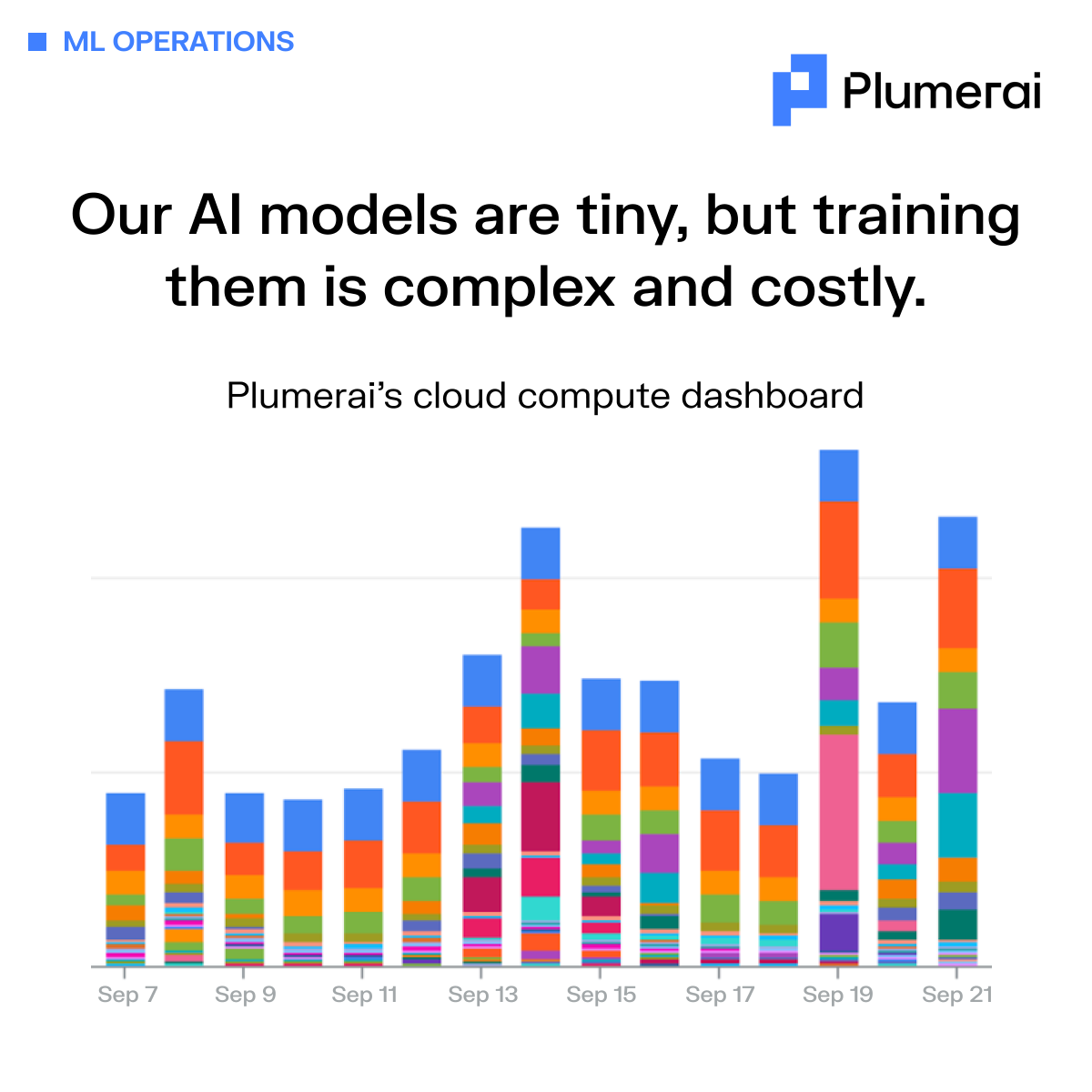

Our AI models are tiny, but training them is complex and costly

This chart captures the intensity of our cloud compute usage over two weeks in September. Each colored bar serves as a reminder of the complexity and cost required to build truly intelligent camera systems.

Our AI may be tiny, but our AI factory is anything but simple. Every day, it handles thousands of tasks—training models on over 30 million images and videos, optimizing for different platforms, and rigorously fine-tuning, testing, and validating each one. All of this happens in the data center, where costs quickly skyrocket when pushing the state-of-the-art in AI.

We handle the heavy lifting of AI development, so you can focus on what matters most—delivering the best product to your customers. We operate the AI factory so you can enjoy cutting-edge technology, without the hassle and cost of managing it yourself.

Want to see results? Try our online demo.

Monday, October 7, 2024

iPod Inventor and Nest Founder Tony Fadell Backs Plumerai’s Tiny AI, Hailing It as a Massive Market Disruption Democratizing AI Technology Starting with Smart Home Cameras

Plumerai Announces Partnership with Chamberlain Group, the Largest Manufacturer of Automated Access Solutions found in 50+ million homes

Press release

London – October 7, 2024 - Plumerai™, a pioneer in on-device AI solutions since 2018, today announced a major partnership with Chamberlain Group, whose brands include myQ and LiftMaster, marking a significant milestone in the adoption of its Tiny AI technology.

To achieve features like People Detection and Familiar Face Identification, cloud-based AI and, in particular, Large Language Models (LLMs) require vast remote data centers, consume increasing amounts of energy, pose privacy risks, and incur rising costs. Plumerai’s Tiny AI can do all this on the device itself, is cost-effective, chip agnostic, capable of operating on battery-powered devices, doesn’t clog up your bandwidth with huge video uploads, and has minuscule energy requirements. Moreover, it boasts the most accurate on-device Tiny AI on the market and offers end-to-end encryption. Already running locally on millions of smart home cameras, Plumerai’s Tiny AI is making communities safer and lives more convenient, while proving that in AI, smaller can indeed be smarter. Plumerai has gained strong backing from early investor Tony Fadell, Principal at Build Collective along with Dr. Hermann Hauser KBE, Founder of Arm, and LocalGlobe.

“Tiny AI is a paradigm shift in how we approach artificial intelligence,” said Roeland Nusselder, Co-founder and CEO at Plumerai. “This approach allows us to embed powerful AI capabilities directly into smart home devices, enhancing security and privacy in ways that were once considered unfeasible. It’s not about making AI bigger - it’s about making it smarter, more accessible, and more aligned with people’s real-world needs."

Key Features of Plumerai’s Tiny AI on the Edge, No Cloud Required

- Person, Pet, Vehicle, and Package Detection notifications in .7 seconds vs. average smart cam 2-10 seconds. This may sound like an incremental improvement, but anyone who has a smart cam will appreciate this is a game-changer.

- Familiar Face Identification and Stranger Detection deliver accurate notifications while protecting privacy; tag up to 40 “safe” i.e. familiar individuals.

- Multi-Cam Person Tracking (first of its kind!) for comprehensive surveillance.

- Advanced Motion Detection with rapid response time of, on average, .5 seconds which greatly extends battery life.

- Over-the-Air updates for products in the field means faster Go-to-Market.

Plumerai has built the most accurate Tiny AI solution for smart home cameras, trained with over 30 million images and videos. Consistently outperforming competitors in every commercial shootout, Plumerai is the undisputed leader in both accuracy and compute efficiency. Internal test comparisons against a leading smart camera, widely regarded as the most accurate among established smart home players, revealed striking results: leading smart camera’s AI incorrectly identified strangers as household members in 2% of the recorded events, while Plumerai’s Familiar Face Identification made no incorrect identifications.

“Plumerai’s technology gives companies a significant edge in a competitive market, proving that efficient, on-device AI is the future of smart home security. It’s a perfect showcase for Tiny AI’s strengths - privacy, real-time processing, and energy efficiency. I’m excited by Plumerai’s potential to expand into other IoT verticals and redefine edge computing,” said Dr. Hermann Hauser KBE, Founder of Arm.

Providing a ready-to-integrate end-to-end solution for AI on the edge, Plumerai is making AI more competitive and a reality for more companies. This breakthrough provides consumers with more options outside the realm of big tech giants like Google, Amazon, Microsoft, and Apple. Unlike other battery powered “smart” cams that send their video feed to the cloud over power-hungry Wi-Fi, Plumerai enables the use of low-power mesh networks to send notifications. With Plumerai, companies can easily change their chips without losing AI features.

“Integrating Plumerai’s Tiny AI into our smart camera lineup has allowed us to offer advanced AI features on affordable, low-power devices. This enables us to provide our customers with smarter, more efficient products that deliver high performance without the need for cloud processing,” said Jishnu Kinwar, VP Advanced Products at Chamberlain Group.

Notably, 11+ million people rely on Chamberlain’s myQ® app daily to access and monitor their homes, communities and businesses, from anywhere.

“Plumerai’s Tiny AI isn’t just an incremental advance – it’s a massive market disruption,” said Tony Fadell, Nest Founder and Build Collective Principal. “While Large Language Models capture headlines, Plumerai is getting commercial traction with its new smaller-is-smarter AI model. Their Tiny AI is faster, cheaper, more accurate, and doesn’t require armies of developers harnessing vast data centers.”

About Plumerai

Plumerai is a pioneering leader in embedded AI solutions, specializing in highly accurate and efficient Tiny AI for smart devices. As the market leader in licensable Tiny AI for smart home cameras, Plumerai powers millions of devices worldwide and is rapidly expanding into video conferencing, smart retail, security, and IoT sectors. Their comprehensive AI suite includes People Detection, Vehicle detection, Animal detection, Familiar Face Identification, and Multi-Cam Person Tacking, all designed to run locally on nearly any camera chip. Headquartered in London and Amsterdam and backed by Tony Fadell, Principal at Build Collective, Dr. Hermann Hauser KBE, Founder of Arm, and LocalGlobe. Plumerai enables developers to add sophisticated AI capabilities to embedded devices, revolutionizing smart technology while prioritizing efficiency and user privacy.

About Build Collective

Build Collective, led by Tony Fadell, is a global investment and advisory firm coaching engineers, scientists, and entrepreneurs working on foundational deep technology. With 200+ startups in its portfolio, Build Collective invests its money and time to help engineers and scientists bring technology out of the lab and into our lives. Supporting companies beyond Silicon Valley, Build Collective’s portfolio is based mainly in the EU and US with some companies in Asia and the Middle East. From tackling food security, sustainability, transportation, energy efficiency, weather, robotics, and disease to empowering small business owners, entrepreneurs, and consumers, the startups in Build Collective’s portfolio are improving our lives and prospects for the future. With no LP’s to report to, the Build Collective team is hands-on and advises for the long-term.

About Chamberlain Group

Chamberlain Group is a global leader in intelligent access and Blackstone portfolio company. Our innovative products, combined with intuitive software solutions, comprise a myQ ecosystem that delivers seamless, secure access to people’s homes and businesses. CG’s recognizable brands, including LiftMaster® and Chamberlain®, are found in 50+ million homes, and 11+ million people rely on our myQ® app daily to control and monitor their homes, communities and businesses, from anywhere. Our patented vehicle-to-home connectivity solution, myQ Connected Garage, is available in millions of vehicles from the leading automakers.

Media Contact:

Elise Houren

[email protected]

Thursday, October 3, 2024

Automatic Enrollment for Plumerai Familiar Face Identification!

We have launched Automatic Enrollment for our Plumerai Familiar Face Identification! Automatic Enrollment makes it effortless to register new individuals for identification; as soon as a person walks up to the camera, they will be automatically registered, and users can tag them with a name in the app.

This feature:

- identifies people from an 8m/26ft distance (often beyond),

- excels with a variety of camera positions, camera angles, and in low light conditions,

- and most importantly, it has been built to run on the device (not in the cloud!), so it doesn’t compromise on privacy.

Plumerai Familiar Face Identification is now operational on millions of cameras and we are proud to share that it is the most accurate solution for the smart home (outperforming Google Nest!). Offering this new feature really takes it to the next level.

Try our demo for yourself here and get in touch if you would like to utilize this incredible feature in your cameras.

Tuesday, September 24, 2024

High accuracy, even in the dark

Plumerai Familiar Face Identification enables smart home cameras to notify you when loved ones arrive safely, activate floodlights if a stranger loiters in your yard, and quickly search through recordings. It’s also a critical building block for the vision LLM features that we’re developing.

As shown in the video, it performs exceptionally well even in complete darkness, accurately and effortlessly identifying people. It works perfectly from many camera positions and with wide-angle lenses.

Our Plumerai Familiar Face Identification is already operational on millions of cameras. It’s the most accurate solution for the smart home (outperforming Google Nest!) and has been winning every commercial shootout.

Give our demo a try in your browser here!

Tuesday, August 27, 2024

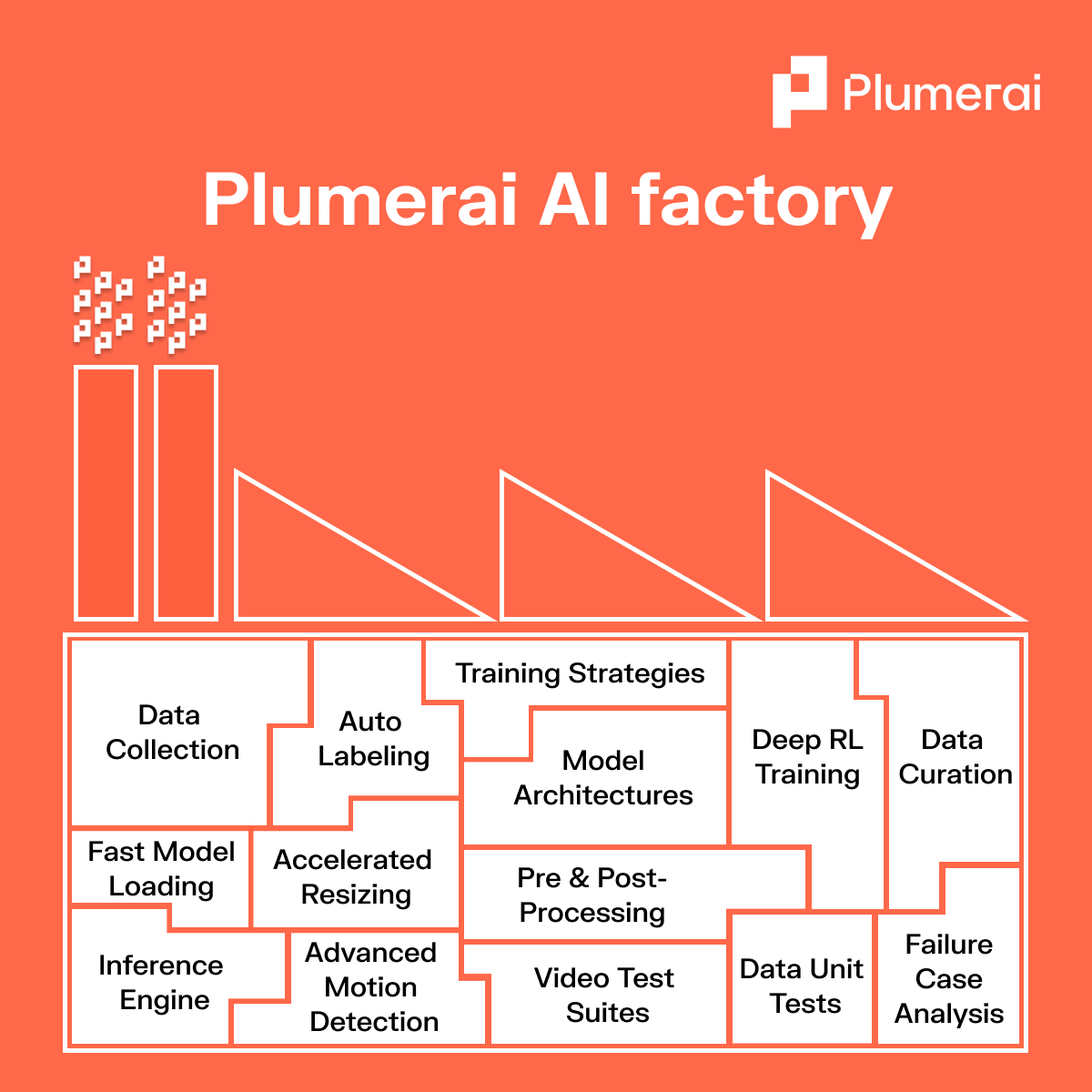

Plumerai AI Factory

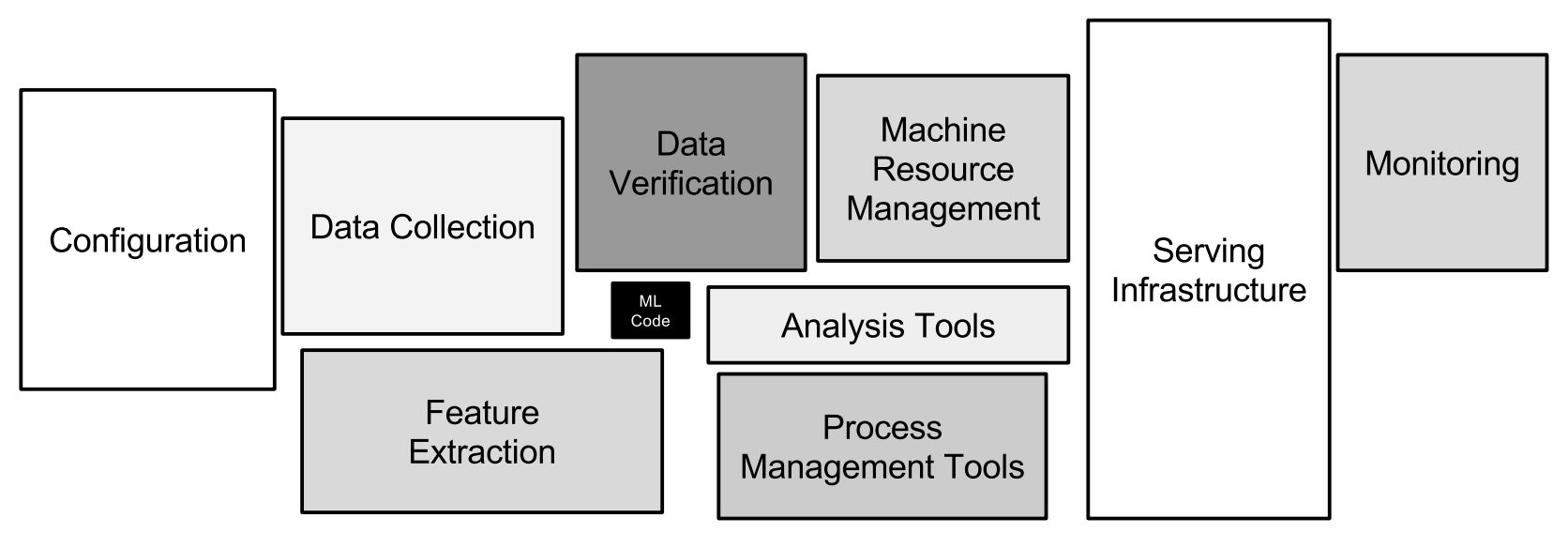

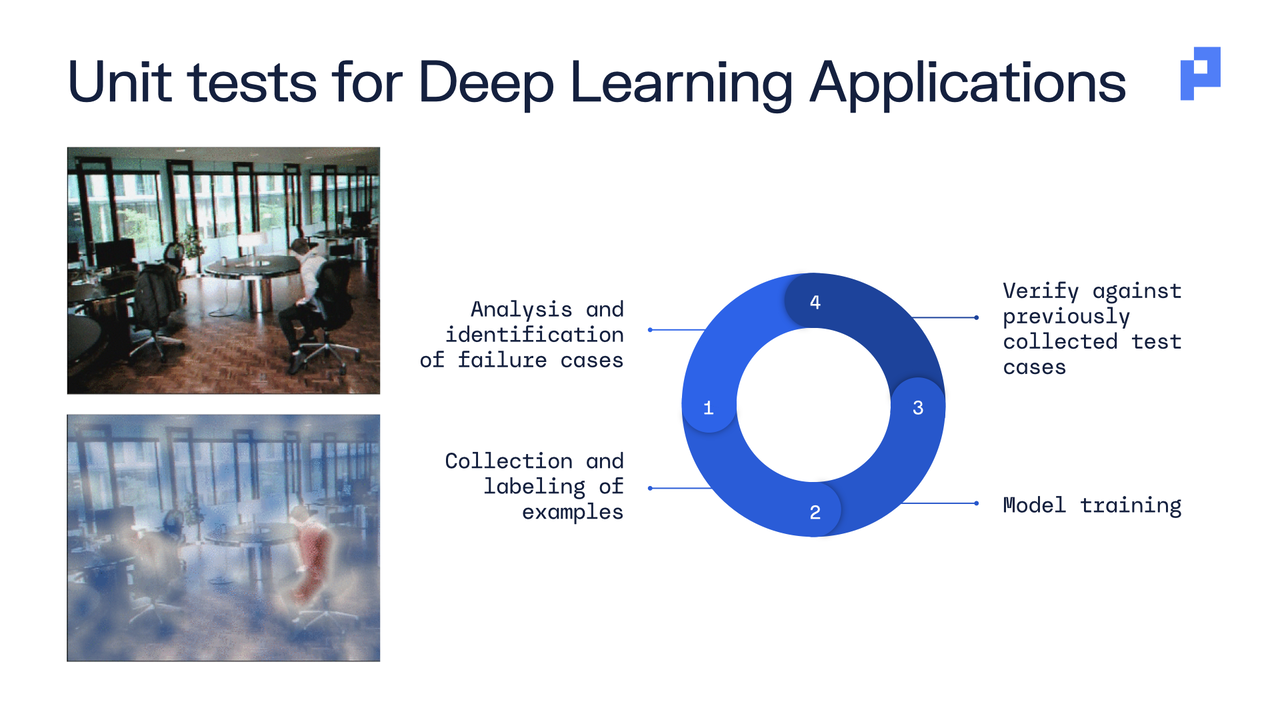

Developing accurate and efficient AI solutions involves a complex process where numerous tasks must work seamlessly together. We refer to this as our Plumerai AI factory.

Our AI factory is built on a foundation of carefully integrated components, each playing a crucial role in delivering accurate and robust AI solutions.

From data collection and labeling to model architecture and deployment, every step is meticulously designed to ensure optimal performance and accuracy. We continuously refine our algorithms and models, apply advanced training strategies, and conduct rigorous data unit tests to identify and mitigate potential failure cases.

This holistic approach enables us to deliver industry-leading AI technology that enhances the smart home experience, providing safety, convenience, and peace of mind for the end users.

Friday, July 26, 2024

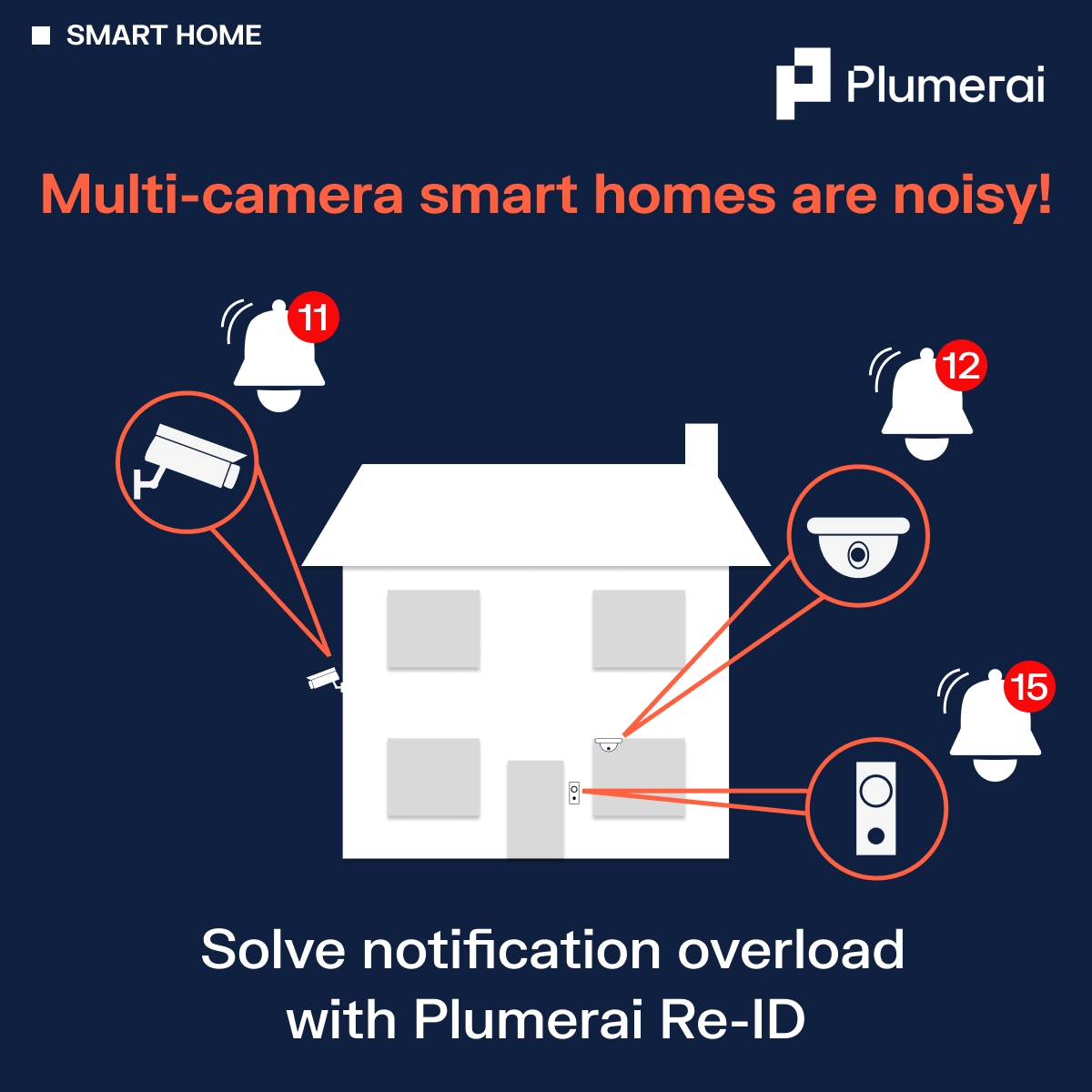

Multi-camera smart homes are noisy!

Solve notification overload with Plumerai Re-ID.

Multi-camera smart homes enhance security, but more cameras mean more notifications. Imagine Emma arriving home to the house pictured below and walking through her home to the garden. Her household would receive three notifications in quick succession from the video doorbell, indoor camera, and outdoor camera. That’s not a good user experience!

At Plumerai, we solve this with our multi-camera Re-Identification AI, which recognizes individuals by their clothes and physique. It tracks the same person as they move around the home, reducing unnecessary alerts. When combined with our Familiar Face Identification, the notification can become even more specific. So, when Emma arrives home and moves through the house to the garden, users receive just one useful notification:

‘Emma arrived home.’

Thursday, July 18, 2024

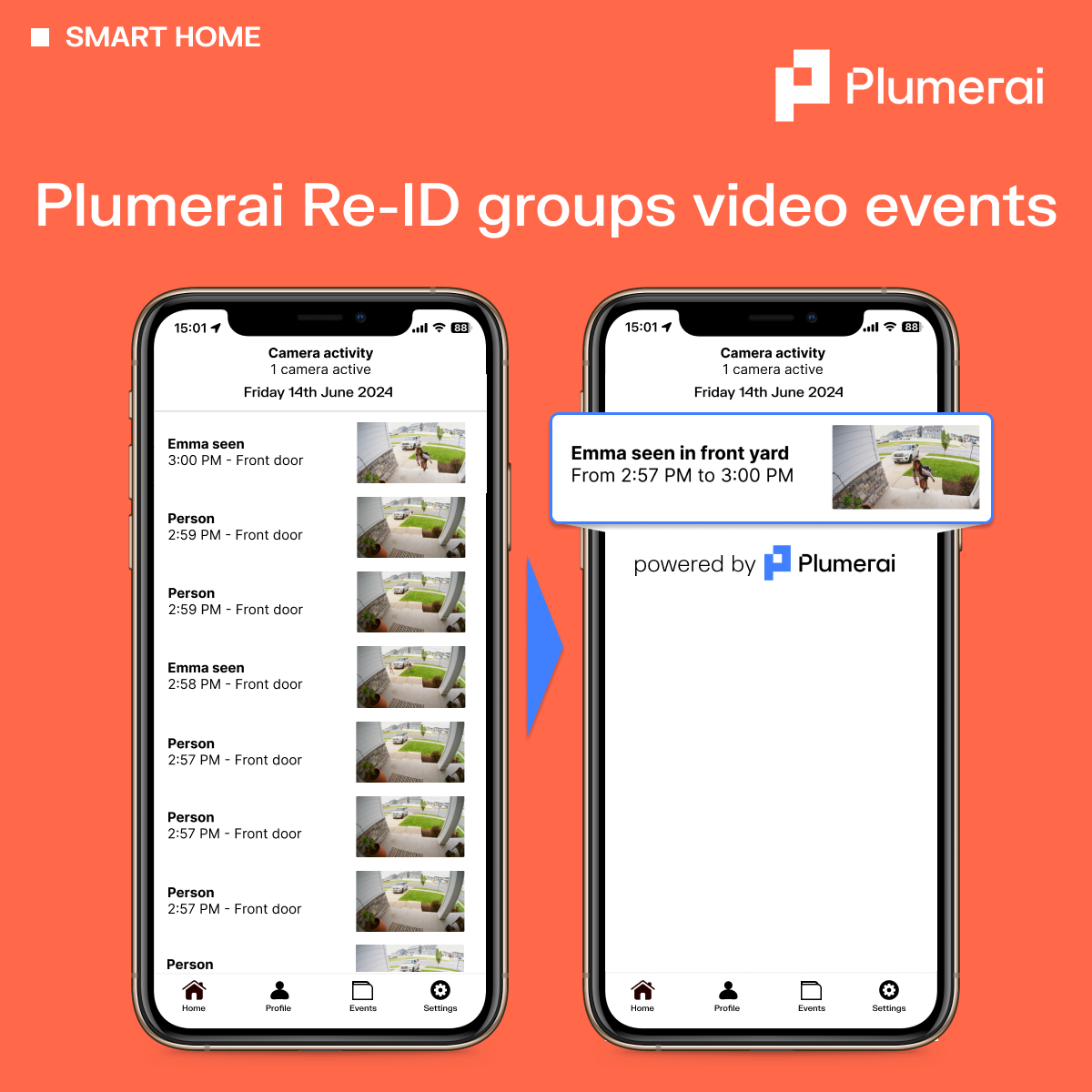

Plumerai Re-ID groups video events

With Plumerai’s Re-identification AI embedded in your smart home cameras, they can remember and recognize a person’s clothing and physique. So, you can seamlessly merge video clips of the same individual into a single, comprehensive video. The result is a simplified, uncluttered activity feed, allowing users to quickly locate and view the video clips they’re interested in, without having to open multiple short clips.

Integrated with Plumerai’s Familiar Face Identification & Stranger Detection, the system also identifies known and unknown individuals. In the example below, users will receive just one, concise video of ‘Emma’ playing outside, instead of dozens of short videos.

Tuesday, July 9, 2024

Plumerai Package Detection AI

Integrate Plumerai Package Detection AI into your video doorbells and give your customers the knowledge of when and where their packages have been delivered. Our AI detects a wide variety of packages, their location, and drop off or pick up. Plumerai Package Detection is delivered as part of our full smart home camera AI solution.

Wednesday, June 26, 2024

Simply brilliant alerts with Plumerai Re-ID

Plumerai’s breakthrough Person Re-identification AI remembers and recognizes a person’s clothing and physique, enabling it to understand when the same person is going in and out of view. Combined with Plumerai’s Familiar Face & Stranger Identification, it can also recognize known and unknown individuals. So if Emma is gardening or taking out the garbage, household members receive a single, insightful alert rather than a flood of unhelpful notifications.

Thursday, June 20, 2024

Fisheye lens? No problem!

Fisheye lenses capture a much wider angle, so users can see a clear view of their porch. However, the fisheye distortion often makes accurate familiar face identification difficult. Plumerai’s AI has been designed to work with a wide variety of fields-of-view, enabling high detection accuracy even on wide angle lenses!

Tuesday, June 11, 2024

Plumerai Package Detection AI

Integrate Plumerai’s new Package Detection AI for video doorbells, so your customers know when packages are delivered to their home. Send snapshots of each package delivery or pickup to give them extra peace of mind.

Plumerai Package Detection AI integrates seamlessly with Plumerai People, Animal, Vehicle, and Stranger Detection, as well as Plumerai Familiar Face Identification.

Thursday, June 6, 2024

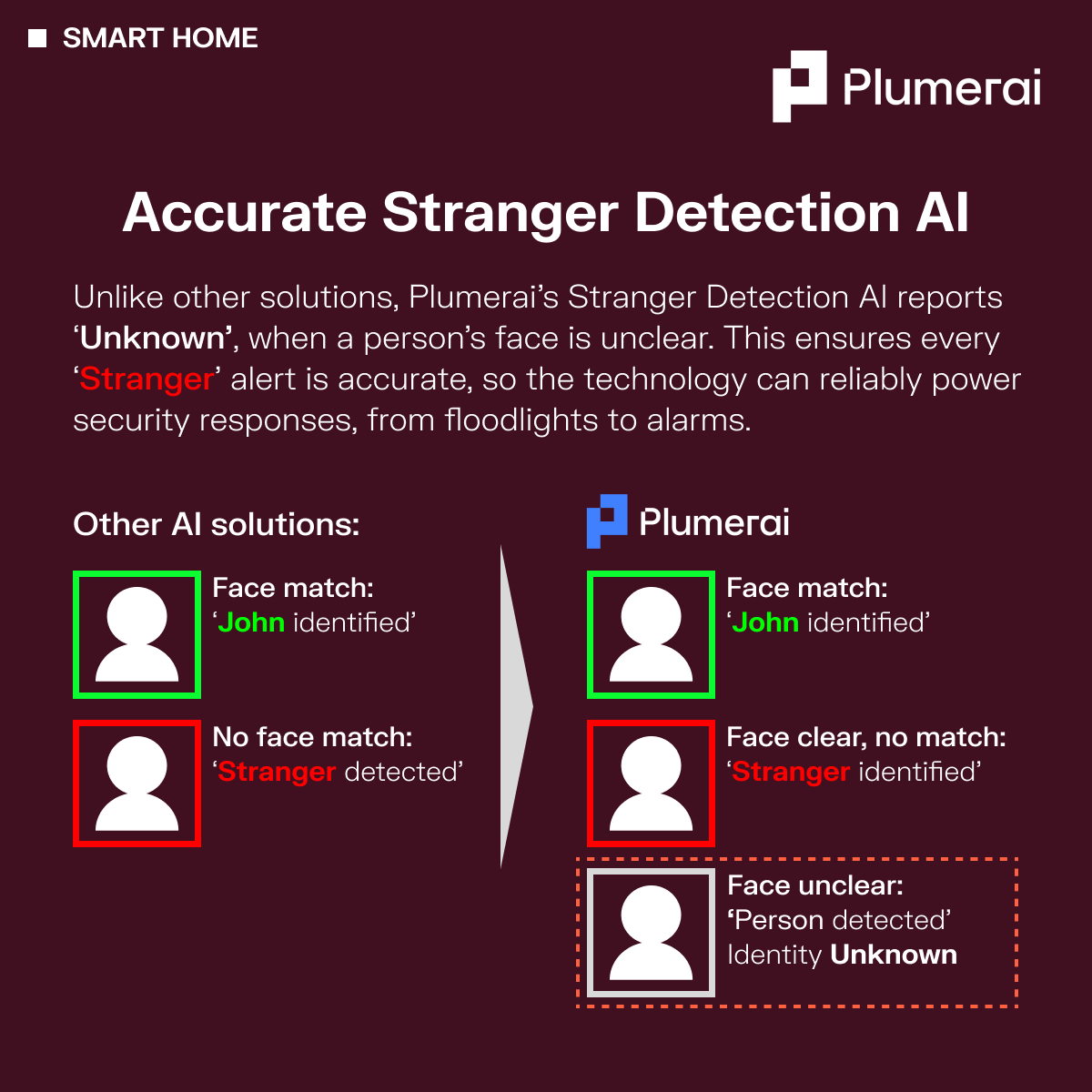

Accurate Stranger Detection AI

Plumerai is enhancing stranger detection! 🚀

Unlike other systems that flag ‘Stranger’ when no face match is found, our AI goes a step further. Plumerai’s technology can distinguish between unclear or distant faces and actual strangers. When the face is unclear, our algorithm labels it as ‘Identity Unknown’ rather than jumping to conclusions. As a result, you can rely our technology to power accurate notifications and security responses, such as turning on floodlights at night and activating alarms when you’re not at home.

Wednesday, April 17, 2024

Intelligent floodlights with Plumerai Stranger Detection AI

We’re taking smart home cameras to the next level with Plumerai’s Stranger Detection AI! Our technology accurately distinguishes between familiar faces and strangers, allowing your customers to tailor their alerts and security response. For an advanced smart home experience, link the doorbell to the home security system: welcome loved ones with warm lighting, and deter unwelcome visitors with red floodlights and an alarm.

Wednesday, March 20, 2024

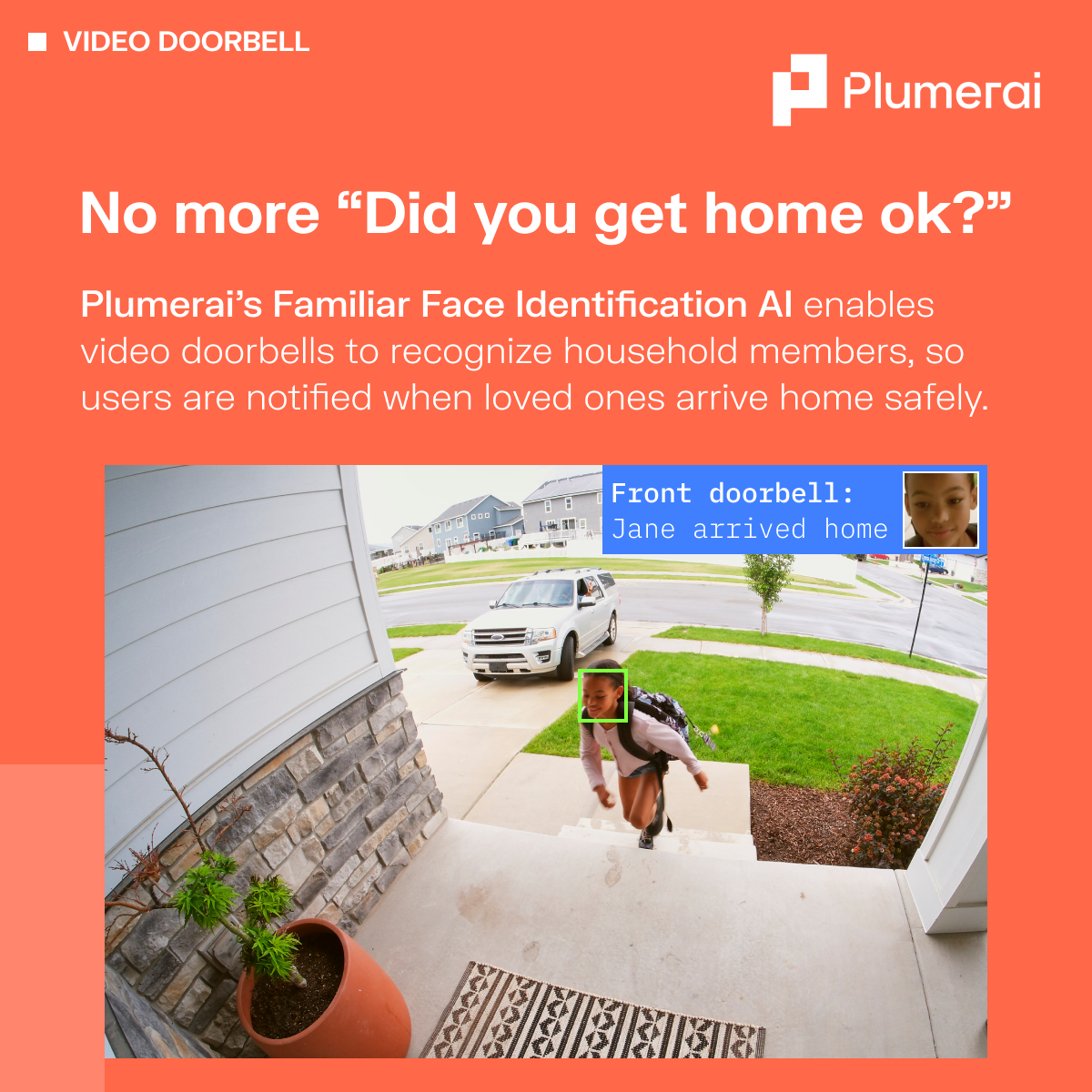

No more "Did you get home ok?"

Offer peace of mind to your customers by deploying Plumerai’s Familiar Face Identification AI to your video doorbell. Our technology recognizes familiar faces, offering personalized alerts like “Jane arrived home”. The best part? Plumerai AI models run on the edge, so your customers’ images don’t leave the device, ensuring their privacy.

Try out our browser-based webcam demo here: plumerai.com/automatic-face-identification-live

Friday, December 15, 2023

Plumerai’s highly accurate People Detection and Familiar Face Identification AI are now available on the Renesas RA8D1 MCU

Discover the power of our highly efficient, on-device AI software, now available on the new Renesas RA8D1 MCU through our partnership with Renesas. In the video below, Plumerai’s Head of Product Marketing, Marco, showcases practical applications in the Smart Home and for IOT, and demonstrates our People Detection AI and Familiar Face Identification AI models.

The Plumerai People Detection AI fits on almost any camera with its tiny footprint of 1.5MB. It outperforms much larger models on accuracy, and achieves 13.6 frames per second on the RA8’s Arm Cortex-M85. Familiar Face Identification is a more complex task and requires multiple neural networks running in parallel, but still runs at 4 frames per second on the Arm Cortex-M85, providing rapid identifications.

All Plumerai models are trained on our diverse dataset of over 30 million images; our proprietary data tooling ensures that only good-quality and highly relevant data is utilized. Our models are proven in the field, and you can try them for yourself in your web browser:

Friday, November 24, 2023

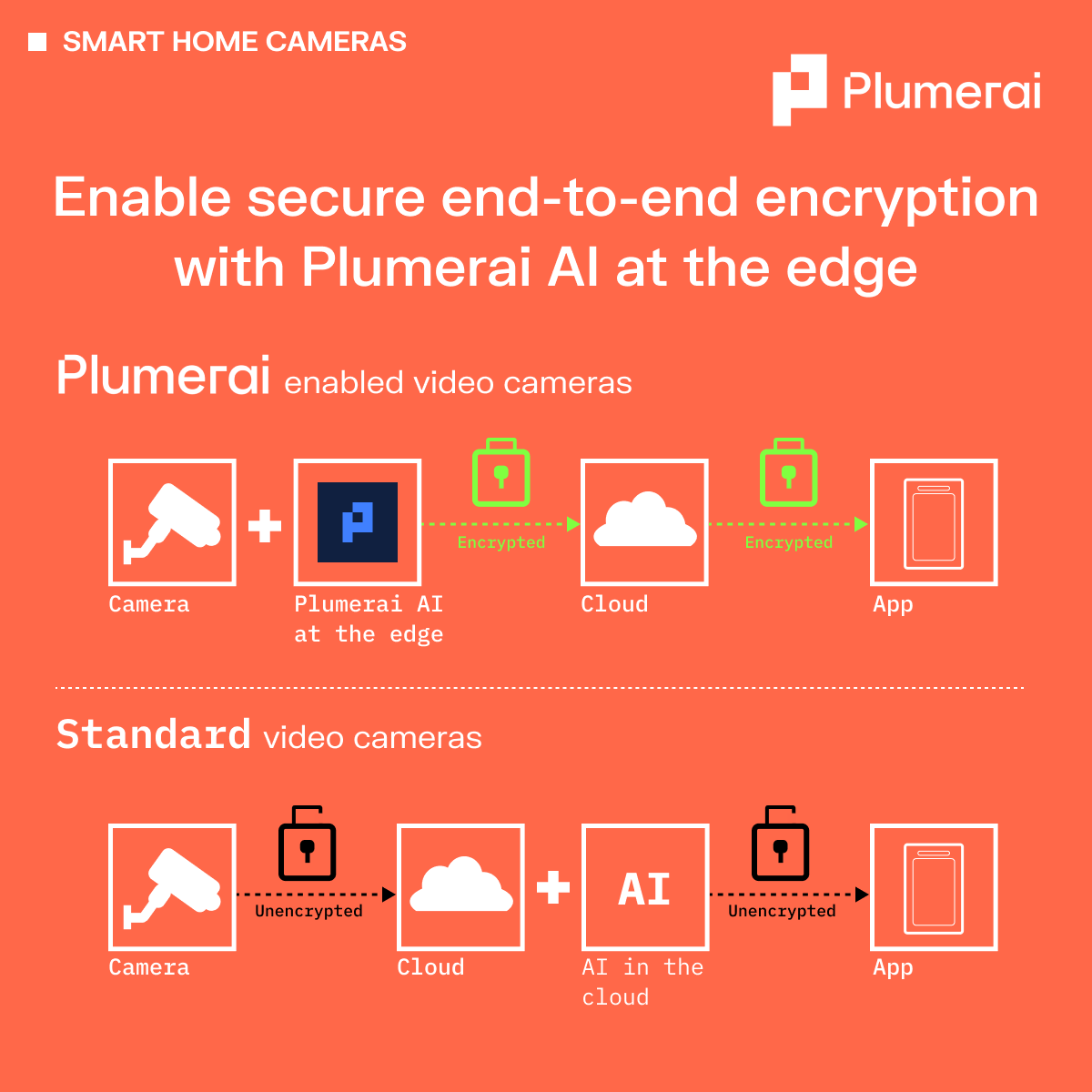

Enable secure end-to-end encryption with Plumerai AI at the edge

To offer AI-powered alerts and recordings, smart home cameras typically send unencrypted footage to the cloud for AI analysis. This is because if the video is encrypted, the AI can’t analyze it; however, sending unencrypted data to the cloud poses risks for privacy and cyber attacks. With Plumerai, all the AI occurs on the camera, enabling you to offer end-to-end encryption on your smart home cameras, as well as advanced AI features! Plumerai gives you the best of both worlds without compromise.

Thursday, November 9, 2023

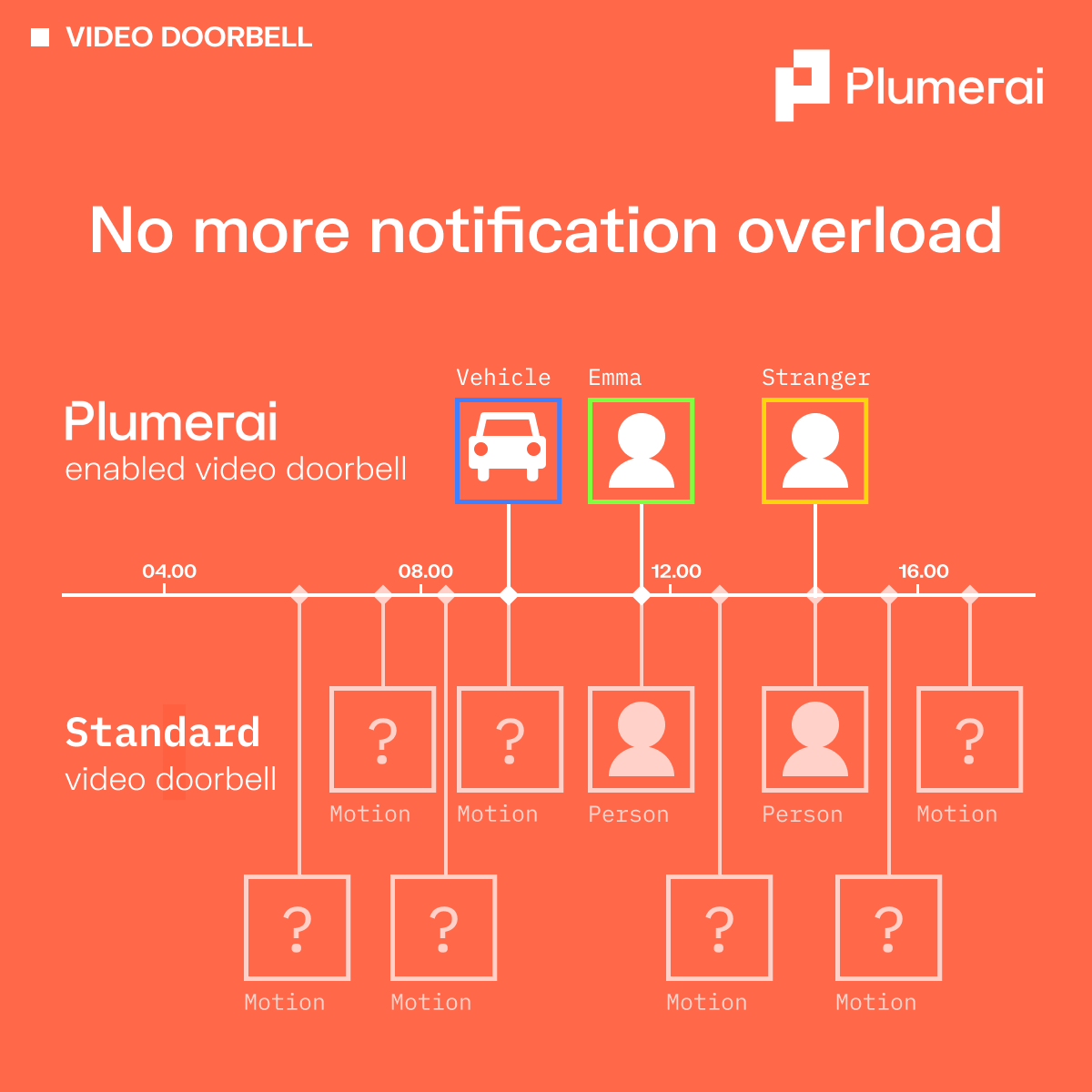

No more notification overload

Say goodbye to irrelevant notifications from your video doorbell. No more notifications from moving branches, shadows, or of yourself coming home. Plumerai’s technology enables your video doorbells to accurately detect people & vehicles and even recognizes household members. So you are only alerted about the events that matter to you.

Try it in your browser now: plumerai.com/automatic-face-identification-live

Thursday, October 26, 2023

Welcoming familiar faces. Keeping watch for strangers.

Upgrade your smart home cameras with Plumerai’s Familiar Face Identification AI for fewer, more relevant notifications. Our AI model runs inside the camera, so images don’t leave the device, protecting your customer’s privacy. Simply deploy our technology to your existing devices via an over-the-air software update.

Try our live AI demo in your browser: plumerai.com/automatic-face-identification-live

Monday, October 2, 2023

Arm Tech Talk: Accelerating People Detection with Arm Helium vector extensions

Watch Cedric Nugteren showcase Plumerai’s People Detection on an Arm Cortex-M85 with Helium vector extensions, running at a blazing 13 FPS with a 3.7x speed-up over Cortex-M7.

Cedric delves deep into Helium MVE, providing a comprehensive comparison to the traditional Cortex-M instruction set. He also demonstrates Helium code for 8-bit integer matrix multiplications, the core of deep learning models.

He shows a live demo on a Renesas board featuring an Arm Cortex-M85, along with a preview of the Arm Ethos-U accelerator, ramping up the frame rate even further to 83 FPS. Additionally, he showcases various other Helium-accelerated AI applications developed by Plumerai.

Monday, July 17, 2023

tinyML EMEA 2023: Familiar Face Identification

Imagine a TV that shows tailored recommendations and adjusts the volume for each viewer, or a video doorbell that notifies you when a stranger is at the door. A coffee machine that knows exactly what you want so you only have to confirm. A car that adjusts the seat as soon as you get in, because it knows who you are. All of this and more is possible with Familiar Face Identification, a technology that enables devices to recognize their users and personalize their settings accordingly.

Unfortunately, common methods for Familiar Face Identification are either inaccurate or require running large models in the cloud, resulting in high cost and substantial energy consumption. Moreover, the transmission of facial images from edge devices to the cloud entails inherent risks in terms of security and privacy.

At Plumerai, we are on a mission to make AI tiny. We have recently succeeded in bringing Familiar Face Identification to the edge. This makes it possible to identify users entirely locally — and therefore securely, using very little energy and with very low-cost hardware.

Our solution uses an end-to-end deep learning approach that consists of three neural networks: one for object detection, one for face representation, and one for face matching. We have applied various advanced model design, compression, and training techniques to make these networks fit within the hardware constraints of small CPUs, while retaining excellent accuracy.

In his talk at the tinyML EMEA Innovation Forum, Tim de Bruin presented the techniques we used to make our Familiar Face Identification solution small and accurate. By enabling face identification to run entirely on the edge, our solution opens up new possibilities for user-friendly and privacy-preserving applications on tiny devices.

These techniques are explained and demonstrated using our live web demo, which you can try for yourself right in the browser.

Tuesday, June 27, 2023

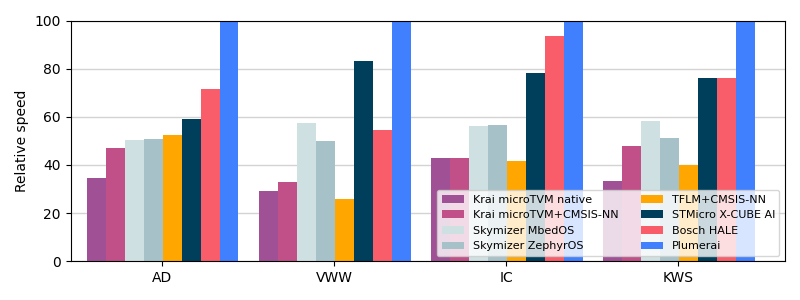

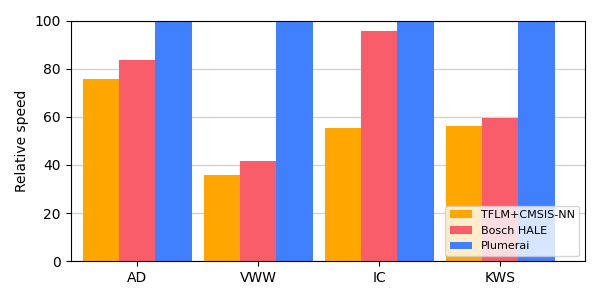

Plumerai wins MLPerf Tiny 1.1 AI benchmark for microcontrollers again

New results show Plumerai leads performance on all Cortex-M platforms, now also on M0/M0+

Last year we presented our MLPerf Tiny 0.7 and MLPerf Tiny 1.0 benchmark scores, showing that our inference engine runs your AI models faster than any other tool. Today, MLPerf released new Tiny 1.1 scores and Plumerai has done it again: we still lead the performance charts. A faster inference engine means that you can run larger and more accurate AI models, go into sleep mode earlier to save power, and/or run AI models on smaller and lower cost hardware.

The Plumerai Inference Engine compiles any AI model into an optimized library and runtime that executes that model on a CPU. Our inference engine executes the AI model as-is: it does no additional quantization, no binarization, no pruning, and no model compression. Therefore, there is no accuracy loss. The AI model simply runs faster than other solutions and has a smaller memory footprint. We license the Plumerai Inference Engine to semiconductor manufacturers such that they can include it in their SDK and make it available to their customers. In addition, we license the Plumerai Inference Engine directly to AI software developers.

Here’s the overview of the MLPerf Tiny 1.1 results for the STM32 Nucleo-L4R5ZI board with an Arm Cortex-M4 CPU running at 120MHz:

For comparison purposes, we also included TensorFlow Lite for Microcontrollers (TFLM) with Arm’s CMSIS-NN in orange, although this is not part of the official MLPerf Tiny results. To be clear: all results in the above graph compare inference engine software-only solutions. The same models run on the same hardware with the same model accuracy.

Since MLPerf Tiny 1.0, we have also improved our inference engine significantly in other areas. We have added new features such as INT16 support (e.g. for audio models), support for more layers, lowered RAM usage further, and added support for more types of microcontrollers.

We recently also extended support for the Arm Cortex-M0/M0+ family. Even though our results for these tiny microcontrollers are not included in MLPerf Tiny 1.1, we did run extra benchmarks with the official MLPerf Tiny software specifically for this blog post and guess what: we beat the competition again! In the following graph we present results on the STM32 Nucleo-G0B1RE board with a 64MHz Arm Cortex-M0+:

As mentioned before, our inference engine also does very well on reducing RAM usage and code size, which are often very important factors for microcontrollers due to their limited on-chip memory sizes. MLPerf Tiny does not measure those metrics, but we do present them here. The following table shows the peak RAM numbers for our inference engine compared to TensorFlow Lite for Microcontrollers (TFLM) with Arm’s CMSIS-NN:

| Memory | Visual Wake Words | Image Classification | Keyword Spotting | Anomaly Detection | Reduction |

|---|---|---|---|---|---|

| 98.80 | 54.10 | 23.90 | 2.60 | ||

| 36.60 | 37.90 | 17.10 | 1.00 | ||

| 2.70x | 1.43x | 1.40x | 2.60x | 2.03x |

And here are our code size results (excluding model weights) compared to TensorFlow Lite for Microcontrollers (TFLM):

| Code size | Visual Wake Words | Image Classification | Keyword Spotting | Anomaly Detection | Reduction |

|---|---|---|---|---|---|

| 187.30 | 93.60 | 104.00 | 42.60 | ||

| 54.00 | 22.50 | 20.20 | 28.40 | ||

| 3.47x | 4.16x | 5.15x | 1.50x | 3.57x |

Want to see how fast your models can run? You can submit them for free on our Plumerai Benchmark service. We compile your model, run it on a microcontroller, and email you the results in minutes. Curious to know more? You can browse our documentation online. Contact us if you want to include the Plumerai Inference Engine in your SDK.

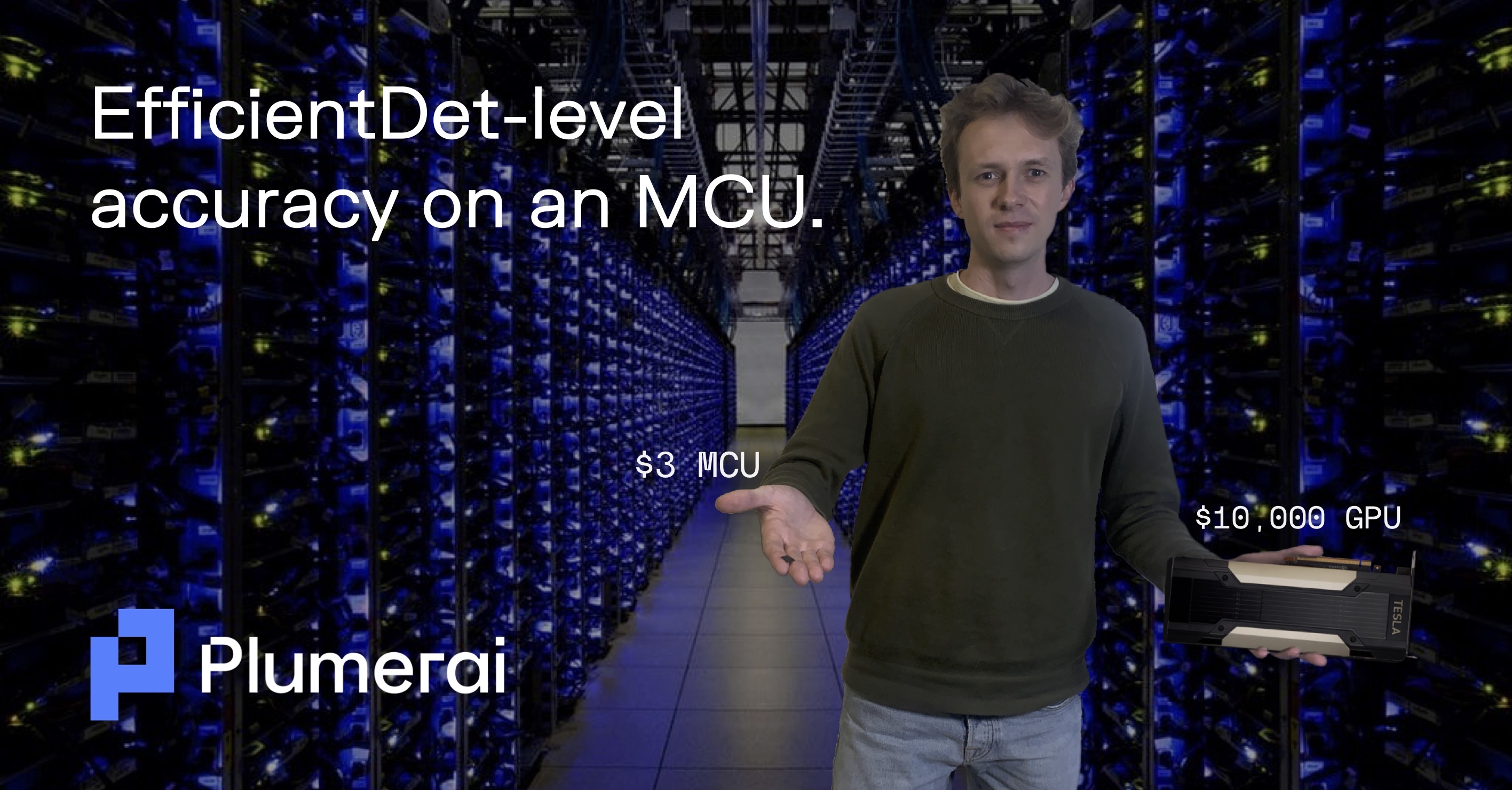

Monday, March 13, 2023

World’s fastest MCU: Renesas runs Plumerai People Detection on Arm Cortex-M85 with Helium

Live demo at the Renesas booth at Embedded World from March 14-16

Plumerai delivers a complete software solution for people detection. Our AI models are fast, highly accurate, and tiny. They even run on microcontrollers. Plumerai People Detection and the Plumerai Inference Engine have now been optimized for the Arm Cortex-M85 also, including extensive optimizations for the Helium vectorized instructions. We worked on this together with Renesas, an industry leader in microcontrollers. Renesas will show our people detection running on an MCU that is based on the Arm Cortex-M85 processor at the Embedded World conference this week.

Microcontrollers are an ideal choice for many applications: they are highly cost-effective and consume very little power, which enables new battery-powered applications. They are small in size, and require minimal external components, such that they can be integrated into small systems.

Plumerai People Detection runs with an incredible 13 fps on the Renesas Helium-enabled Arm Cortex-M85, greatly outperforming any other MCU solution. It speeds up the processing of Plumerai People Detection by roughly 4x compared to an Arm Cortex-M7 at the same frequency. The combination of Plumerai’s efficient software with Renesas’ powerful MCU opens up new applications such as battery-powered AI cameras that can be deployed anywhere.

Faster AI processing is crucial for battery-powered devices because it allows the system to go to sleep sooner and conserve power. Faster processing also results in higher detection accuracy, because the MCU can run larger AI models. Additionally, a high frame rate enables people tracking to help understand when people are loitering, enter specific areas, or to count them.

Renesas has quickly become a leader in the Arm MCU market, offering a feature rich family of over 250 different MCUs. Renesas will implement the new Arm processor within its RA (Renesas Advanced) Family of MCUs.

Contact us for more information about our AI solutions and to receive a demo for evaluation.

Monday, March 6, 2023

Plumerai People Detection AI now runs on Espressif ESP32-S3 MCU

Plumerai People Detection AI is now available on Espressif’s ESP32-S3 microcontroller! Trained with more than 30 million images, Plumerai’s AI detects each person in view, even if partially occluded, and tracks up to 20 people across frames. Running the Plumerai People Detection on Espressif’s MCU enables new smart home, smart building, smart city, and smart health applications. Tiny smart home cameras based on the ESP32-S3 can provide notifications when people are on your property or in your home. Lights can turn on when we get home and the AC can direct the cold airflow toward you. The elderly can stay independent longer with sensors that notice when they need help. Traffic lights notice automatically when you arrive. In retail, customers can be counted for footfall analysis, and displays can show more detailed content when customers get closer to them. The Plumerai People Detection software supports indoor, outdoor, low light, and difficult backgrounds such as moving objects, and can detect at more than 20m / 65ft distance. The Plumerai People Detection runs completely at the edge and all computations are performed on the ESP32-S3. This means there is no internet connection needed, and the captured images never leave the device, increasing reliability and respecting privacy. In addition, performing the people detection task at the edge eliminates costly cloud compute. We are proud to offer a solution that enables more applications and products to benefit from our accurate people detection software.

Resources required on the ESP32-S3 MCU:

- Single core Xtensa LX7 at 240 MHz

- Latency: 303 ms (3.3 fps)

- Peak RAM usage: 166 KiB

- Binary size: 1568 KiB

Demo is readily available:

- Runs on ESP32-S3-EYE board

- OV2640 camera, 66.5° FOV

- 1.3” LCD 240x240 SPI-based display

- Runs FreeRTOS

- USB powered

The ESP32-S3 is a dual-core XTensa LX7 MCU, capable of running at 240 MHz. Apart from its 512 KB of internal SRAM, it also comes with integrated 2.4 GHz, 802.11 b/g/n Wi-Fi and Bluetooth 5 (LE) connectivity that provides long-range support. We extensively optimized our people detection AI software to take advantage of the ESP32-S3’s custom vector instructions in the MCU, providing a significant speedup compared to using the standard CPU’s instruction set. Plumerai People Detection needs only one core to run at more than 3 fps, enabling fast response times. The second core on the ESP32-S3 is available for additional tasks.

A demo is available that runs on the ESP32-S3-EYE board. The board includes a 2-Megapixel camera, and an LCD display, which provides a live display of the camera feed and the bounding boxes, showing where and when people in view are detected.

The ESP32-S3-EYE board is readily available from many electronics distributors. To get access to the demo application fill in your email address and refer to the ESP32 demo. You can find more info on how to use the demo in the Plumerai Docs.

Plumerai People Detection software is available for licensing from Plumerai.

Wednesday, November 9, 2022

MLPerf Tiny 1.0 confirms: Plumerai’s inference engine is again the world’s fastest

Earlier this year in April we presented our MLPerf Tiny 0.7 benchmark scores, showing that our inference engine runs your AI models faster than any other tool. Today, MLPerf released the Tiny 1.0 scores and Plumerai has done it again: we still have the world’s fastest inference engine for Arm Cortex-M architectures. Faster inferencing means you can run larger and more accurate AI models, go into sleep mode earlier to save power, and run them on smaller and lower cost MCUs. Our inference engine executes the AI model as-is and does no additional quantization, no binarization, no pruning, and no model compression. There is no accuracy loss. It simply runs faster and in a smaller memory footprint.

Here’s the overview of the MLPerf Tiny results for an Arm Cortex-M4 MCU:

| Vendor | Visual Wake Words | Image Classification | Keyword Spotting | Anomaly Detection |

|---|---|---|---|---|

| Plumerai | 208.6 ms | 173.2 ms | 71.7 ms | 5.6 ms |

| STMicroelectronics | 230.5 ms | 226.9 ms | 75.1 ms | 7.6 ms |

| 301.2 ms | 389.5 ms | 99.8 ms | 8.6 ms | |

| 336.5 ms | 389.2 ms | 144.0 ms | 11.7 ms |

Compared to TensorFlow Lite for Microcontrollers with Arm’s CMSIS-NN optimized kernels, we run 1.74x faster:

| Speed | Visual Wake Words | Image Classification | Keyword Spotting | Anomaly Detection | Speedup |

|---|---|---|---|---|---|

| 335.97 ms | 376.08 ms | 100.72 ms | 8.45 ms | ||

| 194.36 ms | 170.42 ms | 66.32 ms | 5.59 ms | ||

| 1.73x | 2.21x | 1.52x | 1.51x | 1.74x |

But not only latency is important. Since MCUs often have very limited memory on board it’s important that the inference engine uses minimal memory while executing the neural network. MLPerf Tiny does not report numbers for memory usage, but here are the memory savings we can achieve on the benchmarks compared to TensorFlow Lite for Microcontrollers. On average we use less than half the memory:

| Memory | Visual Wake Words | Image Classification | Keyword Spotting | Anomaly Detection | Reduction |

|---|---|---|---|---|---|

| 98.80 | 54.10 | 23.90 | 2.60 | ||

| 36.50 | 37.80 | 17.00 | 1.00 | ||

| 2.71x | 1.43x | 1.41x | 2.60x | 2.04x |

MLPerf doesn’t report code size, so again we compare against TensorFlow Lite for Microcontrollers. The table below shows that we reduce code size on average by a factor of 2.18x. Using our inference engine means you can use MCUs that have significantly smaller flash size.

| Code size | Visual Wake Words | Image Classification | Keyword Spotting | Anomaly Detection | Reduction |

|---|---|---|---|---|---|

| 209.60 | 116.40 | 126.20 | 67.20 | ||

| 89.20 | 48.30 | 46.10 | 54.20 | ||

| 2.35x | 2.41x | 2.74x | 1.24x | 2.18x |

Want to see how fast your models can run? You can submit them for free on our Plumerai Benchmark service. We email you the results in minutes.

Are you deploying AI on microcontrollers? Let’s talk.

Wednesday, November 2, 2022

World’s fastest inference engine now supports LSTM-based recurrent neural networks

At Plumerai we enable our customers to perform increasingly complex AI tasks on tiny embedded hardware. We recently observed that more and more of such tasks are using recurrent neural networks (RNNs), in particular RNNs using the long-short-term-memory (LSTM) cell architecture. Example uses of LSTMs are analyzing time-series data coming from sensors like IMUs or microphones, human activity recognition for fitness and health monitoring, detecting if a machine will break down, and speech recognition. This led to us optimizing and extending our support for LSTMs and today we are proud to announce that Plumerai’s deep learning inference software greatly outperforms existing solutions for LSTMs on microcontrollers for all metrics: speed, accuracy, RAM usage, and code size.

To demonstrate this, we selected four common LSTM-based recurrent neural networks and measured latency and memory usage. In the table below, we compare our inference software against the September 2022 version of TensorFlow Lite for Microcontrollers (TFLM for short) with CMSIS-NN enabled. We choose TFLM because it is freely available and widely used. Note that ST’s X-CUBE-AI also supports LSTMs, but that only runs on ST chips and works with 32-bit floating-point, making it much slower.

These are the networks used for testing:

- A simple LSTM model from the TensorFlow Keras RNN guide.

- A weather prediction model that performs time series data forecasting using an LSTM followed by a fully-connected layer.

- A text generation model using a Shakespeare dataset using an LSTM-based RNN with a text-embedding layer and a fully-connected layer.

- A bi-directional LSTM that uses context from both directions of the ’time’ axis, using a total of four individual LSTM layers.

| TFLM latency | Plumerai latency | TFLM RAM | Plumerai RAM | |

|---|---|---|---|---|

| Simple LSTM | 941.4 ms | 189.0 ms (5.0x faster) |

19.3 KiB | 14.3 KiB (1.4x lower) |

| Weather prediction | 27.5 ms | 9.2 ms (3.0x faster) |

4.1 KiB | 1.8 KiB (2.3x lower) |

| Text generation | 7366.0 ms | 1350.5 ms (5.5x faster) |

61.1 KiB | 51.6 KiB (1.2x lower) |

| Bi-directional LSTM | 61.5 ms | 15.1 ms (4.1x faster) |

12.8 KiB | 2.5 KiB (5.1x lower) |

Board: STM32L4R9AI with an Arm Cortex-M4 at 120 MHz with 640 KiB RAM and 2 MiB flash. Similar results were obtained using an Arm Cortex-M7 board.

In the above table we report latency and RAM, which are the most important metrics for most users. The faster you can execute a model, the faster the system can go to sleep, saving power. Microcontrollers are also very memory constrained, making thrifty memory usage crucial. In many cases code size (ROM usage) is also important, and again there we outperform TFLM by a large margin. For example, Plumerai’s implementation of the weather prediction model uses 48 KiB including weights and support code, whereas TFLM uses 120 KiB.

The table above does not report accuracy, because accuracy is not changed by our inference engine. It performs the same computations as TFLM without extra quantization or pruning. Just like TFLM, our inference engine does internal LSTM computations in 16 bits instead of 8 bits to maintain accuracy.

In this blog post we highlight the LSTM feature of Plumerai’s inference software. However, other neural networks are also well supported, very fast, and low on RAM and ROM consumption, without losing accuracy. See our MobileNet blog post or the MLPerf blog post for examples and try out our inference software with your own model.

Besides Arm Cortex-M0/M0+/M4/M7/M33, we also optimize our inference software for Arm Cortex-A, ARC EM, and RISC-V architectures. Get in touch if you want to use the world’s fastest inference software with LSTM support on your embedded device.

Friday, September 30, 2022

Plumerai’s people detection powers Quividi’s audience measurement platform

We’re happy to report that Quividi adopted our people detection solution for its audience measurement platform. Quividi is a world leader in the domain of measuring audiences for digital displays and retail media. They have over 600 customers analyzing billions of shoppers every month, across tens of thousands of screens.

Quividi’s platform measures consumer engagement in all types of venues, outside, inside, and in-store. Shopping malls, vending machines, bus stops, kiosks, digital merchandising displays and retail media screens measure audience impressions, enabling monetization of the screens and leveraging shopper engagement data to drive sales up. The camera-based people detection is fully anonymous and compliant with privacy laws, since no images are recorded or transmitted.

With the integration of Plumerai’s people detection, Quividi expands the range of its platform capabilities, since our tiny and fast AI software runs seamlessly on any Arm Cortex-A processor, instead of on costly and power-hungry hardware. Building a tiny and accurate people detection solution takes time: we collect and curate our own data, design and train our own model architectures with over 30 million images, and then run them on off-the-shelf Arm CPUs using our world’s fastest inference engine software.

With the integration of Plumerai’s people detection, Quividi expands the range of its platform capabilities, since our tiny and fast AI software runs seamlessly on any Arm Cortex-A processor, instead of on costly and power-hungry hardware. Building a tiny and accurate people detection solution takes time: we collect and curate our own data, design and train our own model architectures with over 30 million images, and then run them on off-the-shelf Arm CPUs using our world’s fastest inference engine software.

Besides measuring audiences, people detection can really enhance the user experience in many other markets: tiny smart cameras that alert you when there is someone in your backyard, webcams that ensure you’re always optimally in view, or air conditioners and smart lights that turn off automatically when you leave the room are just a few examples.

More information on our people detection can be found here.

Contact us to evaluate our solution and learn how to integrate it in your product.

Friday, May 13, 2022

tinyML Summit 2022: ‘Tiny models with big appetites: cultivating the perfect data diet'

Although lots of research effort goes into developing small model architectures for computer vision, real gains cannot be made without focusing on the data pipeline. We already mentioned the importance of quality data and some pitfalls of public datasets in an earlier blog post, and have been further improving our data tooling a lot since then to make our data processes even more powerful. We have incorporated several machine learning techniques that enable us to curate our datasets at deep learning scale, for example by identifying images that contributed strongly to a specific false prediction during training.

In this recording of his talk at tinyML Summit 2022, Jelmer demonstrates how our fully in-house data tooling leverages these techniques to curate millions of images, and describes several other essential parts of our model and data pipelines. Together, these allow us to build accurate person detection models that run on the tiniest edge devices.

Friday, May 6, 2022

MLPerf Tiny benchmark shows Plumerai's inference engine on top for Cortex-M

We recently announced Plumerai’s participation in MLPerf Tiny, the best-known public benchmark suite for evaluation of machine learning inference tools and methods. In the latest v0.7 of the MLPerf Tiny results, we participated along with 7 other companies. The published results confirm the claims that we made earlier on our blog: our inference engine is indeed the world’s fastest on Arm Cortex-M microcontrollers. This has now been validated and tested using standardized methods and reviewed by third parties. And what’s more, everything was also externally certified for correctness by evaluating model accuracy on four representative neural networks and applications from the domain: anomaly detection, image classification, keyword spotting, and visual wake words. In addition, our inference engine is also very memory efficient and works well on Cortex-M devices from all major vendors.

| Visual Wake Words | Image Classification | Keyword Spotting | Anomaly Detection | |